GPT-5.4: A Closer Look at OpenAI’s New Model

OpenAI released GPT-5.4 on March 5, 2026, calling it the company’s most capable and efficient frontier model for professional work. The update landed just two days after GPT-5.3 Instant, making this one of the fastest back-to-back releases in the company’s history. The model is available in three configurations: a standard version for general use, GPT-5.4 Thinking for extended chain-of-thought reasoning, and GPT-5.4 Pro for the most demanding workloads. The rollout covers ChatGPT, the API, and Codex for Plus, Team, Pro, and Enterprise subscribers.

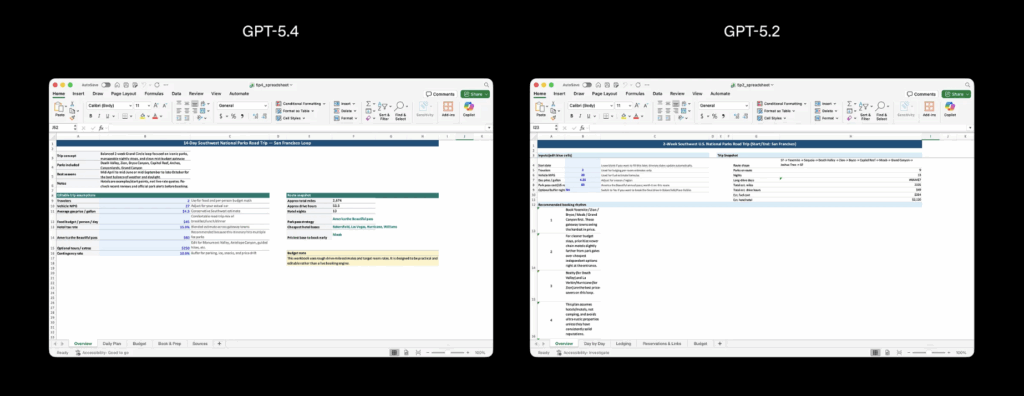

The previous flagship, GPT-5.2 Thinking, will remain accessible in the Legacy Models section for three months before being retired on June 5, 2026. GPT-5.4 absorbs the coding capabilities of GPT-5.3-Codex and adds improvements across document understanding, tool use, spreadsheet workflows, and multimodal tasks.

A Million-Token Context Window and Better Token Efficiency

For API users, GPT-5.4 supports context windows of up to 1 million tokens. That is among the largest context windows OpenAI has offered, and it allows developers to analyze entire codebases, long document collections, or extended agent trajectories within a single request. Experimental support for the 1M context window is also available inside Codex.
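
As a rough illustration of what a 1-million-token budget means in practice, the sketch below estimates whether a set of text documents would fit in a single request. The 4-characters-per-token ratio is a common rule of thumb for English text, not the model’s actual tokenizer, and the names `estimate_tokens`, `fits_in_context`, and `reserve_for_output` are hypothetical ones introduced here.

```python
CONTEXT_LIMIT = 1_000_000  # tokens, per the figure quoted in this article
CHARS_PER_TOKEN = 4        # assumption: rough heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count from character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserve_for_output: int = 0) -> bool:
    """Return True if the estimated total leaves room for the reserved output."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= CONTEXT_LIMIT
```

For real workloads, a tokenizer-based count would be more reliable than a character heuristic, particularly for source code and non-English text.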

Alongside the expanded context, OpenAI reports that the model is significantly more token-efficient than its predecessor. It uses fewer tokens to solve equivalent problems, which could offset its slightly higher per-token pricing for many use cases.

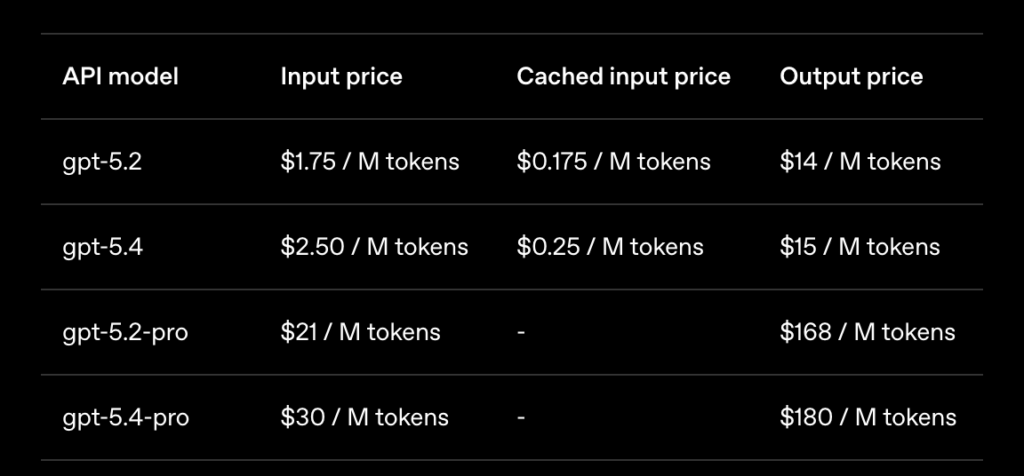

The official API pricing for GPT-5.4 is set at $2.50 per million input tokens and $15.00 per million output tokens, with cached inputs at $0.25 per million. These rates are higher than the previous generation’s, but the efficiency gains may reduce total costs in practice.
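
To make the pricing concrete, here is a minimal cost estimate using the three rates quoted above. It assumes cached tokens are a subset of the input tokens and are billed at the cached rate instead of the standard input rate; that billing model and the function name `estimate_cost` are assumptions made for illustration.

```python
# Prices in USD per million tokens, as quoted in this article.
INPUT_PRICE = 2.50
CACHED_INPUT_PRICE = 0.25
OUTPUT_PRICE = 15.00


def estimate_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Return the estimated request cost in USD.

    cached_tokens is the portion of input_tokens billed at the cached rate
    (an assumption about how caching is billed).
    """
    if cached_tokens > input_tokens:
        raise ValueError("cached_tokens cannot exceed input_tokens")
    fresh_tokens = input_tokens - cached_tokens
    cost = (
        fresh_tokens * INPUT_PRICE
        + cached_tokens * CACHED_INPUT_PRICE
        + output_tokens * OUTPUT_PRICE
    ) / 1_000_000
    return round(cost, 6)


# 200k input tokens (150k of them cached) and 4k output tokens.
example = estimate_cost(200_000, 4_000, cached_tokens=150_000)  # 0.2225
```

Because output tokens cost six times as much as fresh input tokens at these rates, a model that reaches the same answer with fewer output tokens can cost less per task even at a higher list price.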

Native Computer Use and Upgraded Vision

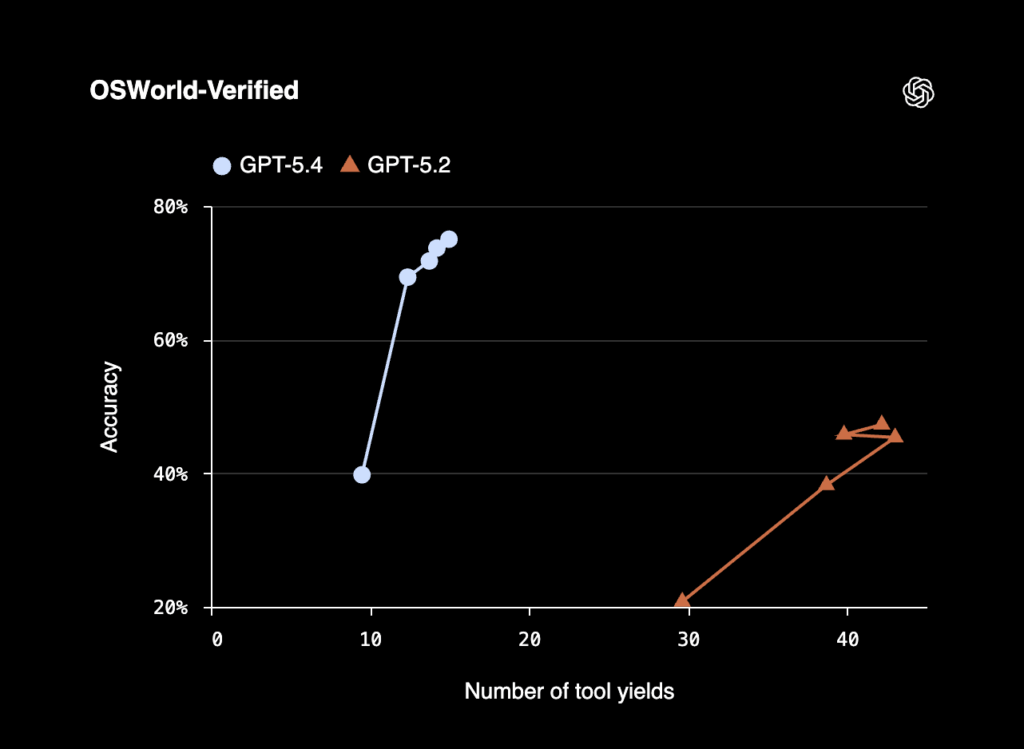

GPT-5.4 is the first mainline OpenAI model with built-in computer use capabilities. It can interact directly with software through screenshots and mouse and keyboard commands, enabling agents to work in a build-run-verify-fix loop. On the OSWorld-Verified benchmark, which measures desktop navigation through screenshots and input actions, the model achieved a 75.0% success rate. That result exceeds reported human performance at 72.4% and is a jump of 27.7 percentage points from GPT-5.2’s 47.3%.
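
The build-run-verify-fix pattern can be sketched as a bounded retry loop. This is a generic illustration, not OpenAI’s computer use interface: `run_until_verified`, `act`, and `verify` are hypothetical names, and the environment below is simulated with plain strings in place of real screenshots.

```python
from typing import Callable


def run_until_verified(
    act: Callable[[int], str],
    verify: Callable[[str], bool],
    max_attempts: int = 3,
) -> tuple[bool, int]:
    """Repeat act -> verify until verification passes or attempts run out.

    act receives the attempt number (starting at 1) so it can change its
    approach after a failure; verify inspects the observed result.
    Returns (succeeded, attempts_used).
    """
    for attempt in range(1, max_attempts + 1):
        observation = act(attempt)  # e.g. click or type, then capture the screen
        if verify(observation):     # e.g. check the capture for the expected state
            return True, attempt
    return False, max_attempts


# Simulated environment: the first attempt leaves a dialog open, the second succeeds.
result = run_until_verified(
    act=lambda n: "dialog open" if n == 1 else "file saved",
    verify=lambda obs: obs == "file saved",
)
print(result)  # (True, 2)
```

Capping the number of attempts keeps an agent from looping indefinitely when a task cannot be verified.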

Visual understanding also received a notable upgrade. A new "original" image input detail level supports full-fidelity perception up to 10.24 million pixels or a 6000-pixel maximum dimension. The existing "high" detail level was also expanded to support up to 2.56 million pixels. In early testing with API users, OpenAI observed meaningful gains in localization ability, image understanding, and click accuracy when using the "original" or "high" detail settings.
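
The pixel budgets above translate into a simple sizing check. The sketch below computes how much an image would need to be scaled down to fit a given detail level. It assumes both the pixel cap and the dimension cap must hold for "original", and that an oversized image is scaled uniformly; how the API actually handles oversized images is not stated in the source, and `downscale_factor` is a hypothetical helper.

```python
import math

# Limits as quoted in this article; how they are enforced is an assumption.
LIMITS = {
    "original": {"max_pixels": 10_240_000, "max_dimension": 6000},
    "high": {"max_pixels": 2_560_000, "max_dimension": None},
}


def downscale_factor(width: int, height: int, level: str) -> float:
    """Return the factor (at most 1.0) to scale both sides by so the image fits."""
    limit = LIMITS[level]
    factor = 1.0
    pixels = width * height
    if pixels > limit["max_pixels"]:
        # Area scales with the square of the side factor.
        factor = min(factor, math.sqrt(limit["max_pixels"] / pixels))
    longest = max(width, height)
    if limit["max_dimension"] is not None and longest > limit["max_dimension"]:
        factor = min(factor, limit["max_dimension"] / longest)
    return factor
```

Under these assumptions, a 3200 x 3200 screenshot fits the "original" level unchanged, while the "high" level would require halving each side.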

Tool Search and Agentic Workflows

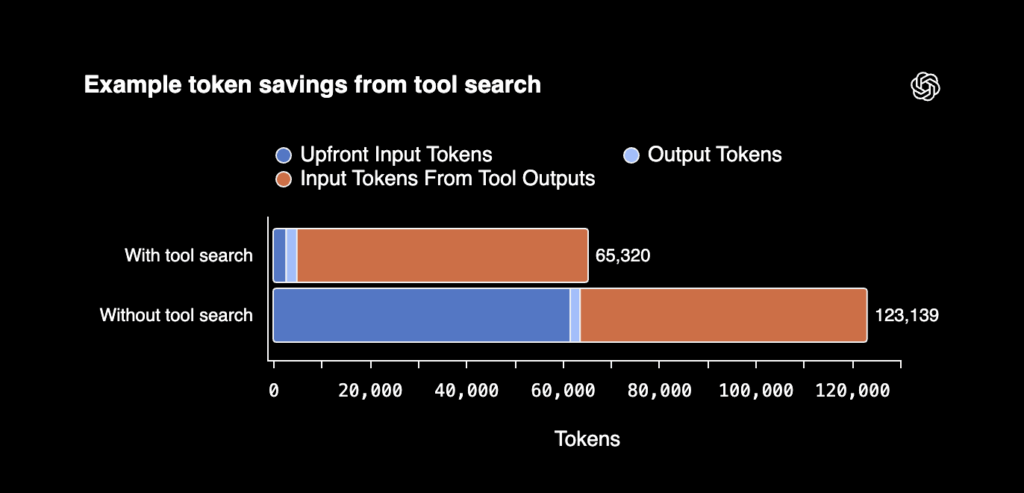

One of the more consequential changes for developers is Tool Search. Previously, when a model was given access to tools, all tool definitions had to be included upfront in the prompt. For systems with many tools, this could add thousands of tokens to every request, slowing responses and increasing cost. With Tool Search, GPT-5.4 instead receives a lightweight list of available tools and can look up specific definitions only when needed. OpenAI describes this as a way to dramatically reduce token usage in tool-heavy workflows while preserving cache efficiency.
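
The idea behind deferred tool lookup can be illustrated with a toy registry. This is not OpenAI’s Tool Search API: the tool names, schemas, and helper functions below are invented for illustration, and serialized character count stands in as a crude proxy for token usage.

```python
import json

# Hypothetical tool catalog; names and schemas are invented for illustration.
TOOL_DEFINITIONS = {
    "get_stock_price": {
        "description": "Fetch the latest price for a ticker symbol.",
        "parameters": {
            "type": "object",
            "properties": {
                "ticker": {"type": "string", "description": "Exchange ticker, e.g. ACME"},
                "currency": {"type": "string", "enum": ["USD", "EUR", "GBP"]},
            },
            "required": ["ticker"],
        },
    },
    "create_calendar_event": {
        "description": "Create a calendar event.",
        "parameters": {
            "type": "object",
            "properties": {
                "title": {"type": "string"},
                "start": {"type": "string", "description": "ISO 8601 start time"},
                "end": {"type": "string", "description": "ISO 8601 end time"},
                "attendees": {"type": "array", "items": {"type": "string"}},
            },
            "required": ["title", "start", "end"],
        },
    },
}


def build_index(definitions: dict) -> list[dict]:
    """Return the lightweight list sent upfront: names and one-line summaries only."""
    return [
        {"name": name, "summary": spec["description"]}
        for name, spec in definitions.items()
    ]


def look_up_tool(definitions: dict, name: str) -> dict:
    """Return the full definition for one tool, fetched only when it is needed."""
    return definitions[name]


def serialized_size(obj) -> int:
    """Character count of the JSON form, used as a crude proxy for token cost."""
    return len(json.dumps(obj))


upfront_all = serialized_size(TOOL_DEFINITIONS)
upfront_index = serialized_size(build_index(TOOL_DEFINITIONS))
on_demand = serialized_size(look_up_tool(TOOL_DEFINITIONS, "get_stock_price"))
```

Even with only two tools, the index plus one on-demand definition is smaller than sending every definition upfront. The gap would widen as the catalog grows, since each request pays only for the tools it actually uses.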

The model also introduces a preamble feature in ChatGPT. For longer, more complex queries, GPT-5.4 Thinking now outlines its approach before diving in. Users can adjust the model’s direction mid-response, making it possible to guide the output without starting over. This feature is currently available on chatgpt.com and the Android app, with iOS support coming soon.

Fewer Hallucinations, Stronger Benchmarks

On factual accuracy, OpenAI reports that GPT-5.4 is its most reliable model to date. Individual claims are 33% less likely to be false and full responses are 18% less likely to contain any errors, relative to GPT-5.2. These figures are self-reported and benchmarked against GPT-5.2 rather than the more recent GPT-5.3, so the actual incremental improvement over the latest model may be smaller.
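
Because both figures are relative reductions, their practical effect depends on the baseline error rate, which the article does not give. The sketch below applies the reported percentages to hypothetical baselines chosen purely for illustration.

```python
def apply_relative_reduction(baseline_rate: float, reduction: float) -> float:
    """Scale a baseline error rate down by a relative reduction (0.33 means 33%)."""
    return baseline_rate * (1.0 - reduction)


# Hypothetical baselines; no absolute rates are reported in the source.
claim_rate = apply_relative_reduction(0.10, 0.33)     # 10% false claims -> about 6.7%
response_rate = apply_relative_reduction(0.30, 0.18)  # 30% flawed responses -> about 24.6%
```

In this illustration, a 33% relative reduction moves the claim error rate by only 3.3 percentage points, which is why relative figures should be read alongside absolute rates where those are available.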

The benchmark results across professional tasks are strong. GPT-5.4 scored 91% on BigLaw Bench for legal document analysis and took the top position on Mercor’s APEX-Agents benchmark, which evaluates agents on sustained professional tasks in investment banking, consulting, and corporate law. In a broader knowledge work evaluation across 44 occupations, the model matched or exceeded industry professionals in 83% of comparisons. These tasks ranged from generating sales presentations and accounting spreadsheets to creating manufacturing diagrams and urgent care schedules.

Safety evaluations also received attention. OpenAI introduced an open-source evaluation for chain-of-thought controllability, which tests whether models can deliberately obfuscate their reasoning to evade monitoring. The company found that GPT-5.4 Thinking’s ability to control its CoT is low, framing this as a positive signal that monitoring the model’s visible reasoning remains effective. Anthropic published related research earlier in 2026, which OpenAI references in its launch materials.

New Enterprise Integrations

Alongside the model release, OpenAI launched ChatGPT for Excel and Google Sheets in beta, embedding the model directly into spreadsheets for financial modeling and data analysis. New integrations with FactSet, MSCI, Third Bridge, and Moody’s allow teams to pull market data, company data, and internal data into a single workflow.

These moves put OpenAI in more direct competition with Anthropic, which launched similar financial services tools with Claude in mid-2025 and expanded them later that year. OpenAI’s CFO Sarah Friar told CNBC in January 2026 that enterprise customers make up about 40% of the company’s business, with an expectation to reach 50% by the end of the year. The GPT-5.4 release and its enterprise-focused features clearly serve that strategy.

The Bigger Picture: Cost, Scale, and Alternatives

The pace of frontier model releases has reached a point where maintaining a durable lead is genuinely difficult. OpenAI teased its next model on the same afternoon GPT-5.4 launched. That cadence, two major releases in under a week with the next already hinted at, suggests that staying visible in the news cycle is now part of the competitive playbook.

Each new generation of frontier models demands more compute, and the infrastructure required to serve sub-second responses to millions of users is not trivial. Companies like Microsoft, Google, Amazon, Meta, and Apple are collectively spending hundreds of billions of dollars on AI infrastructure. That concentration of compute in a handful of companies has prompted legitimate questions about long-term sustainability and access.

It is also part of the reason decentralized alternatives have been gaining attention. Bittensor, for instance, operates a peer-to-peer network where AI services are produced and distributed through specialized subnets. One of its most prominent subnets, Chutes (SN64), functions as a serverless AI inference platform that gives developers API access to a wide range of models.

Chutes claims to operate at roughly 85% lower cost than comparable cloud providers and has processed trillions of tokens since launch. It currently ranks as one of the leading inference providers on OpenRouter, alongside centralized players like OpenAI and Anthropic. Whether decentralized inference can match the speed and scale of frontier models like GPT-5.4 remains an open question, but the traction these projects are seeing suggests genuine demand for alternatives to the centralized approach.

GPT-5.4 is a genuine step forward for OpenAI. The improvements in computer use, token efficiency, tool management, and hallucination reduction are all measurable and meaningful. Whether these advances are enough to sustain a lead in an increasingly crowded field is something only real-world adoption will reveal.
