Meta Glasses Can Help You Hear Better

Meta has released a software update for its AI-powered smart glasses that strengthens their role as everyday wearable tools. The update focuses on clearer conversations and vision-based music playback. As a result, the glasses become more useful in real-world environments where background noise and visual context shape daily interactions.

The new features are launching on Ray-Ban Meta and Oakley Meta HSTN smart glasses. Conversation focus is initially available to users in the United States and Canada. At the same time, Meta continues to position these devices as companions that support daily communication and awareness.

Clearer Conversations in Loud Spaces

One of the most important additions is conversation focus. This feature improves speech clarity when background noise interferes with understanding. Many people struggle in restaurants, offices, and public transport. This challenge often appears even without diagnosed hearing loss.

The glasses amplify the voice of the person directly in front of the wearer. They use AI-driven audio processing and open-ear speakers to deliver clearer sound. In addition, users can adjust the amplification level by swiping the right temple or changing settings on the device. This control allows quick adaptation to busy cafes, bars, or commuter trains.

Because of this, the feature can support people with mild hearing difficulty, early age-related hearing decline, and auditory processing challenges. It can also help professionals who rely on clear speech throughout the day. Meta first introduced this feature at Meta Connect, and it now arrives as a tool ready for daily use.

Music That Responds to What You See

Alongside audio improvements, the update introduces a Spotify integration. This feature connects visual input directly to music playback. The glasses recognize what the wearer is looking at and select matching music.

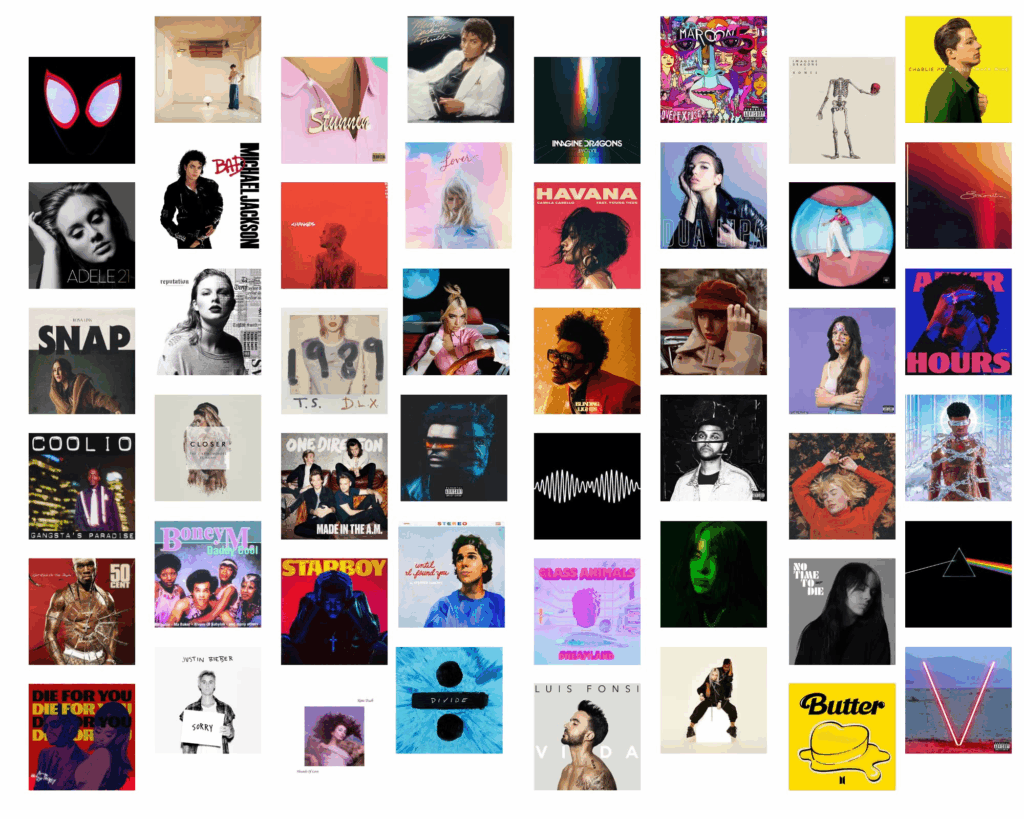

For example, looking at an album cover can start a song by that artist. Similarly, viewing seasonal decorations can trigger themed music. Through this feature, Meta shows how computer vision can link real-world scenes with digital services in an intuitive way.

Smart Glasses as Hearing Support Tools

Meta’s approach follows a growing industry trend of using wearables as hearing support tools. Apple already offers Conversation Boost in AirPods, and newer Pro models include hearing-aid-level support.

As a result, consumer devices now provide situational hearing assistance. Smart glasses offer a discreet option for people who experience hearing challenges but prefer flexible support. This approach suits users who are not ready for clinical hearing aids or want help only in specific environments.

Where and When the Update Is Available

During the initial rollout, conversation focus remains limited to the United States and Canada. Meanwhile, the Spotify feature is available in English across Europe, Australia, India, the Middle East, and North America.

The update arrives as software version 21. It first reaches users enrolled in Meta’s Early Access Program, which requires waitlist approval. Meta plans a broader release later, after collecting early feedback.

A Practical Direction for AI Wearables

Overall, this update marks a clear step forward for Meta’s smart glasses. Improved speech clarity and vision-driven actions increase their daily usefulness. As AI wearables continue to evolve, smart glasses increasingly support how people hear, communicate, and interact with the world around them.