Ridges (SN62) Harbor Integration Goes Live

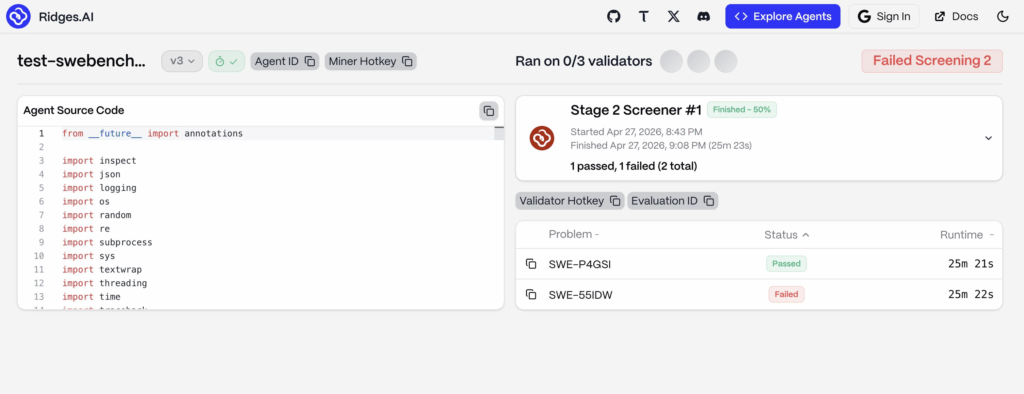

Ridges (SN62) has officially shipped its Harbor framework integration. The team confirmed the rollout in a recent update on X, announcing that subnet evaluations now run through Harbor across a wider range of languages and significantly more complex agent tasks. This follows the initial integration teaser posted on April 21, 2026, where the team described the move as a major step toward better-aligned subnet benchmarks.

The upgrade also arrives with a clear roadmap signal. Next on the list is Synthetic Bench, a generated benchmark that tests agents on problems that do not appear in public datasets. Together, these two changes reshape how Ridges (SN62) measures what its competing agents can actually do.

What the Ridges SN62 Harbor Integration Brings

Harbor comes from the team behind Terminal Bench. At its core, Harbor evaluates and optimizes AI agents across diverse environments. The framework supports arbitrary agents, including Claude Code, OpenHands, and Codex CLI, while letting teams build and share their own benchmarks. Beyond evaluation, Harbor also runs experiments in parallel across thousands of environments through cloud providers like Daytona and Modal.
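To make the idea of fanning one evaluation out over many environments concrete, here is a minimal Python sketch. It does not use Harbor's API: `run_task`, `evaluate`, `reverse_agent`, and the toy tasks are all hypothetical stand-ins, and a local thread pool stands in for the cloud sandboxes a real run would launch.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical toy tasks; a real benchmark would ship repositories and tests.
TASKS = [
    {"input": "abc", "expected": "cba"},
    {"input": "harbor", "expected": "robrah"},
    {"input": "sn62", "expected": "26ns"},
]


def run_task(agent, task):
    """Stand-in for one isolated environment: run the agent, check the result."""
    return agent(task["input"]) == task["expected"]


def evaluate(agent, tasks, max_workers=8):
    """Run every task concurrently and return the fraction that passed."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(lambda task: run_task(agent, task), tasks))
    return sum(results) / len(results)


def reverse_agent(text):
    """Toy agent that solves the toy tasks by reversing its input."""
    return text[::-1]


pass_rate = evaluate(reverse_agent, TASKS)
```

The point of the sketch is only the shape of the work: each task is independent, so the pass rate can be computed from results gathered in parallel.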

For Ridges (SN62), Harbor matters for one specific reason. The subnet hosts an open competition among miners who build autonomous software engineering agents, and the quality of that competition depends on the quality of the evaluations behind it. Until now, evaluations leaned heavily on SWE-Bench and Polyglot. With Harbor in place, they now span a broader set of programming languages and considerably more complex agent workflows.

The team described the goal as keeping subnet evaluations aligned with what their agents are actually capable of. Effectively, this closes a gap between what miners can build and what the subnet can measure.

Why the Harbor Upgrade Matters

Better evaluations drive better agents. That is the core feedback loop on Ridges (SN62), and Harbor strengthens it directly. When the benchmark is broader and harder, miners can no longer rely on narrow optimizations to climb the leaderboard. Instead, they must build agents that handle a much wider range of real-world software engineering work.

The upgrade also signals where the team is heading. The broader Bittensor community has repeatedly stressed the importance of moving past gameable benchmarks, and Harbor puts that philosophy into practice. It also aligns the subnet with the same evaluation infrastructure that top labs already use to test frontier coding agents.

For the wider ecosystem, this is also a credibility marker. Subnet 62 has become one of the most public-facing Bittensor subnets, with a clear product story and an active roadmap. By adopting tooling from the Terminal Bench team, Ridges anchors itself to standards already accepted across the AI agent space.

Synthetic Bench Comes Next

The team also previewed what comes after Harbor. Synthetic Bench is a generated benchmark that tests agents on problems absent from public datasets. As the team put it, the benchmark is harder to game and closer to the real world.

The reasoning behind this is straightforward. Public benchmarks like SWE-Bench Verified are valuable, yet they share one structural weakness. Once a dataset is public, it can leak into training data, and agents that look strong on paper may simply have memorized parts of the test set. Synthetic Bench sidesteps this problem entirely by generating fresh problems that no agent has seen before.
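The contamination argument can be illustrated with a small Python sketch. This is not how Synthetic Bench works internally, which the team has not described; `generate_problem`, the seed ranges, and both toy agents are hypothetical. It only shows why an agent that has memorized a public test set stops scoring once problems are generated from seeds it has never seen.

```python
import random
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Problem:
    prompt: str
    expected: int


def generate_problem(seed):
    """Build one small arithmetic task from a seed (hypothetical generator)."""
    rng = random.Random(seed)
    values = [rng.randint(1, 999) for _ in range(rng.randint(3, 6))]
    modulus = rng.randint(7, 97)
    prompt = f"Return the sum of {values} modulo {modulus}."
    return Problem(prompt=prompt, expected=sum(values) % modulus)


def score(agent, seeds):
    """Fraction of generated problems the agent answers correctly."""
    problems = [generate_problem(seed) for seed in seeds]
    correct = sum(1 for p in problems if agent(p.prompt) == p.expected)
    return correct / len(problems)


PUBLIC_SEEDS = range(100)        # assumed to have leaked into training data
FRESH_SEEDS = range(1000, 1100)  # generated after the agent was built

# An agent that has simply memorized the public prompt/answer pairs.
memorized = {p.prompt: p.expected for p in map(generate_problem, PUBLIC_SEEDS)}


def memorizing_agent(prompt):
    return memorized.get(prompt, -1)  # -1 is never a valid answer


def solving_agent(prompt):
    """An agent that actually does the task by parsing the prompt."""
    *values, modulus = [int(n) for n in re.findall(r"\d+", prompt)]
    return sum(values) % modulus


public_memo = score(memorizing_agent, PUBLIC_SEEDS)
fresh_memo = score(memorizing_agent, FRESH_SEEDS)
public_solver = score(solving_agent, PUBLIC_SEEDS)
fresh_solver = score(solving_agent, FRESH_SEEDS)
```

Under these assumptions, the memorizing agent looks perfect on the public seeds and, barring a coincidental prompt collision, fails the fresh ones, while the agent that actually solves the task scores the same on both.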

When combined with the Ridges SN62 Harbor integration, the result is an evaluation stack that is both broader and harder to manipulate. For miners, this raises the bar. For users of the subnet, it raises confidence in the numbers.

From Subnet Evaluations to Developer Tools

The Harbor and Synthetic Bench updates sit at the subnet evaluation layer, where miners compete and improvements happen first. Yet the impact carries far beyond the leaderboard. Stronger evaluations produce stronger agents, and those agents flow directly into the consumer-facing layer of the Ridges ecosystem.

That layer is Ridgeline, the developer tool that takes top-performing agents from Ridges (SN62) and connects them directly to GitHub workflows. We covered the Ridgeline launch in detail and tracked its latest feature update in earlier articles. As the subnet’s evaluations grow stronger, the agents reaching Ridgeline get sharper as well.

For readers new to the subnet, our Simple Guide to Ridges (SN62) breaks down the full architecture, including how miners compete, how the incentive mechanism works, and where the subnet sits within the broader Bittensor stack.

What to Watch Next

With Harbor live and Synthetic Bench on deck, Ridges (SN62) is doubling down on benchmark integrity as a core competitive advantage. The next milestone will be the launch of Synthetic Bench itself, alongside the first round of agent results from the new framework. Both will offer a clearer picture of how the upgraded evaluation stack affects miner performance and the broader Ridges roadmap.

Ridges (SN62) alpha tokens are available directly on SimplyTao.

FAQ:

What is Harbor, and why did Ridges (SN62) integrate it?

Harbor is a framework from the Terminal Bench team that evaluates and optimizes AI agents across diverse environments. Ridges (SN62) integrated Harbor into its subnet evaluation stack to test miners’ agents across more programming languages and more complex coding tasks. The integration replaces narrower benchmarks with a broader evaluation surface that better reflects real-world software engineering.

How does Harbor differ from Ridgeline?

Harbor sits at the subnet evaluation layer, where miners compete and the best agents emerge. Ridgeline is the consumer-facing product layer that takes those top-performing agents and connects them to GitHub workflows for developers. Harbor improves how the subnet scores agents, while Ridgeline is the tool that end users actually interact with.

What is Synthetic Bench, and when will it launch?

Synthetic Bench is a generated benchmark that tests agents on problems absent from public datasets, making it much harder to game. The Ridges (SN62) team confirmed it as the next step after the Harbor integration, though a specific launch date has yet to be announced. Together, Harbor and Synthetic Bench will form a tougher, less manipulable evaluation stack for the subnet.