Targon (SN4) and Intel TDX: Confidential Compute on Bittensor

Manifold Labs, the team behind Targon (SN4) on the Bittensor network, has released a joint whitepaper with Intel. The paper, titled “Decentralized Compute on Untrusted Hardware Using Intel TDX and Encrypted CVMs,” introduces a confidential computing architecture built for decentralized AI infrastructure. It was published on March 23, 2026, with a simultaneous release on Intel’s community blog and Manifold’s releases page.

The Problem: Trusted Workloads on Untrusted Machines

Decentralized compute networks depend on independent hardware providers who own and operate the physical machines. This creates a fundamental security gap. Traditional encryption protects data at rest and in transit, but it does not protect data during active computation. When a workload runs on someone else’s hardware, the operator can potentially inspect memory, tamper with execution, or extract sensitive information like model weights and training data.

The whitepaper directly addresses this gap with a layered architecture that combines Intel Trust Domain Extensions (TDX), Intel Trust Authority (ITA), and NVIDIA Confidential Computing.

What Intel TDX Brings to the Table

Intel TDX is a hardware-level technology built into Intel Xeon processors (5th Gen Xeon Scalable “Emerald Rapids” and Xeon 6 “Granite Rapids”). It creates cryptographically isolated virtual machines called Trust Domains (TDs). Inside a Trust Domain, CPU state and memory are encrypted with hardware-managed keys that are inaccessible to the host operating system, hypervisor, or machine operator. Even someone with full physical control of the server cannot read what is happening inside the VM.

The whitepaper pairs Intel TDX on the CPU side with NVIDIA Confidential Computing on GPUs (Hopper H100/H200 and Blackwell B200 series). GPUs operate in Protected PCIe (PPCIe) mode, which encrypts communication between CPU and GPU. Together, these technologies provide end-to-end protection for AI workloads across the entire hardware stack.

How the Architecture Works

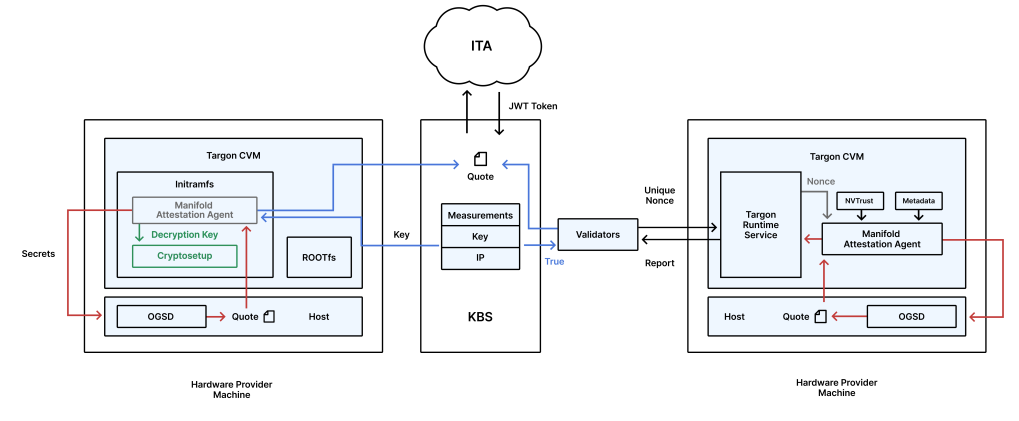

When a hardware provider joins the network, Targon’s Image Gateway generates a unique, encrypted Confidential Virtual Machine (CVM) based on a hardened Ubuntu 24.04 image. Each CVM receives a randomly generated per-VM disk encryption key stored in Intel’s Key Broker Service (KBS). The key is released only after the VM passes remote attestation through Intel Trust Authority.
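The release rule can be illustrated with a toy model of a key broker that generates one random disk key per CVM and hands it out only against a passing attestation verdict. This is a minimal sketch under stated assumptions: `ToyKeyBroker` and `AttestationVerdict` are hypothetical names, not the Intel KBS or Intel Trust Authority APIs, and the verdict here is just a boolean rather than a verified quote.

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationVerdict:
    """Hypothetical stand-in for the result returned by an attestation service."""
    vm_id: str
    passed: bool


class ToyKeyBroker:
    """Hypothetical broker: one random disk key per CVM, released only after attestation."""

    def __init__(self) -> None:
        self._keys: dict[str, bytes] = {}

    def provision(self, vm_id: str) -> None:
        # Fresh 256-bit key from the OS CSPRNG, generated separately for each VM.
        if vm_id in self._keys:
            raise ValueError(f"{vm_id} is already provisioned")
        self._keys[vm_id] = secrets.token_bytes(32)

    def release_key(self, verdict: AttestationVerdict) -> bytes:
        # Fail closed: without a passing verdict the disk key is never returned.
        if not verdict.passed:
            raise PermissionError(f"attestation failed for {verdict.vm_id}")
        if verdict.vm_id not in self._keys:
            raise PermissionError(f"no key provisioned for {verdict.vm_id}")
        return self._keys[verdict.vm_id]
```

The broker fails closed: a failed verdict raises `PermissionError` instead of returning anything, which mirrors the article’s point that the encrypted disk simply stays locked.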

At boot, a Manifold Attestation Agent collects cryptographic measurements of the entire boot chain, including kernel, firmware, and initramfs. These measurements are verified against expected values. If anything has been tampered with, the key stays locked and the VM simply does not launch.
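Measured boot works by folding each component into a register with a one-way “extend” operation, so any change to a component, or to their order, yields a different final value. The sketch below assumes the TDX runtime-measurement-register style of extend (SHA-384 over the old 48-byte value concatenated with the component digest) and collapses the whole chain into a single register for simplicity; the real Manifold Attestation Agent’s register layout and golden values are not described here, and the function names are hypothetical.

```python
import hashlib
import hmac


def extend(register: bytes, component: bytes) -> bytes:
    """Extend a 48-byte register: new = SHA-384(old || SHA-384(component))."""
    digest = hashlib.sha384(component).digest()
    return hashlib.sha384(register + digest).digest()


def measure_boot_chain(components: list[bytes]) -> bytes:
    """Fold every boot component, in order, into one register that starts at zero."""
    register = bytes(48)
    for blob in components:
        register = extend(register, blob)
    return register


def boot_chain_matches(components: list[bytes], expected: bytes) -> bool:
    """True only if the measured chain equals the expected (golden) value."""
    return hmac.compare_digest(measure_boot_chain(components), expected)
```

Measuring `[b"firmware", b"kernel", b"initramfs"]` once gives the golden value; swapping in a patched kernel or reordering the components makes `boot_chain_matches` return `False`, which is the condition under which the key would stay locked.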

After boot, the system re-verifies node integrity every 72 minutes, which corresponds to one Bittensor tempo (360 blocks at 12 seconds per block). Each attestation round uses a challenge-response nonce issued by validators, which prevents replay attacks. The process also covers GPU state through NVIDIA’s nvTrust SDK, binding CPU and GPU verification into a single cryptographic proof. Nodes that fail any check are immediately removed from the scheduling pool.
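A toy model helps show why a fresh nonce defeats replay and how GPU evidence can be folded into the same proof. This is a hedged sketch, not Targon’s protocol: the node hashes the validator’s nonce together with a GPU evidence digest into a 64-byte value (the size of a TDX quote’s report-data field) and “signs” it. The HMAC signature is a loud simplification, since a real TDX quote is signed with an asymmetric, Intel-rooted key and checked by a verifier such as Intel Trust Authority; `Evidence`, `node_respond`, and `Validator` are hypothetical names, and the GPU evidence is an opaque byte string rather than real nvTrust output.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass

BLOCK_SECONDS = 12   # Bittensor block time
TEMPO_BLOCKS = 360   # blocks per tempo
REATTEST_SECONDS = BLOCK_SECONDS * TEMPO_BLOCKS  # 4320 s, i.e. 72 minutes


@dataclass(frozen=True)
class Evidence:
    nonce: bytes
    cpu_measurement: bytes
    gpu_digest: bytes
    report_data: bytes
    signature: bytes


def bind(nonce: bytes, gpu_digest: bytes) -> bytes:
    # One 64-byte value that commits to both the challenge and the GPU state.
    return hashlib.sha512(nonce + gpu_digest).digest()


def sign(key: bytes, cpu_measurement: bytes, report_data: bytes) -> bytes:
    # Simplification: HMAC stands in for the hardware-rooted quote signature.
    return hmac.new(key, cpu_measurement + report_data, hashlib.sha384).digest()


def node_respond(key: bytes, nonce: bytes, cpu_measurement: bytes, gpu_evidence: bytes) -> Evidence:
    gpu_digest = hashlib.sha384(gpu_evidence).digest()
    report_data = bind(nonce, gpu_digest)
    return Evidence(nonce, cpu_measurement, gpu_digest, report_data,
                    sign(key, cpu_measurement, report_data))


class Validator:
    def __init__(self, key: bytes, expected_cpu: bytes, expected_gpu_digest: bytes) -> None:
        self._key = key
        self._expected_cpu = expected_cpu
        self._expected_gpu = expected_gpu_digest
        self._outstanding: set[bytes] = set()

    def challenge(self) -> bytes:
        nonce = secrets.token_bytes(32)
        self._outstanding.add(nonce)
        return nonce

    def verify(self, ev: Evidence) -> bool:
        # A nonce is consumed on first use, so replayed evidence is rejected.
        if ev.nonce not in self._outstanding:
            return False
        self._outstanding.discard(ev.nonce)
        return (
            hmac.compare_digest(ev.signature, sign(self._key, ev.cpu_measurement, ev.report_data))
            and hmac.compare_digest(ev.report_data, bind(ev.nonce, ev.gpu_digest))
            and hmac.compare_digest(ev.cpu_measurement, self._expected_cpu)
            and hmac.compare_digest(ev.gpu_digest, self._expected_gpu)
        )
```

Submitting the same `Evidence` twice fails on the second call because its nonce has already been consumed, and evidence built from different GPU state fails the digest check even with a fresh nonce. `REATTEST_SECONDS` simply restates the 72-minute cadence as 360 blocks of 12 seconds.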

The whitepaper also details anti-cloning protections. Upon first successful attestation, the KBS permanently binds the CVM to the provider’s IP address. Providers cannot copy encrypted disks to other machines, migrate VMs, or replay attestation from different locations. If a provider’s IP changes, the existing CVM becomes permanently inaccessible.
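The anti-cloning rule amounts to “first successful attestation wins”: the broker records the source IP at that moment and refuses key release from any other address afterwards. The sketch below is a hypothetical policy object, not the KBS’s actual interface; it trusts the reported source address as given and does not model network-level spoofing.

```python
from ipaddress import ip_address


class IpBindingBroker:
    """Hypothetical policy: bind a CVM to the IP seen at its first successful
    attestation and refuse key release from any other address afterwards."""

    def __init__(self) -> None:
        self._bound: dict[str, str] = {}

    def request_key(self, vm_id: str, source_ip: str, attested: bool) -> bool:
        if not attested:
            # Failed attestation never creates or changes a binding.
            return False
        ip = str(ip_address(source_ip))  # validates and normalizes the address
        bound = self._bound.setdefault(vm_id, ip)
        # The binding is never updated, so a new IP locks the CVM out permanently.
        return bound == ip
```

Because the stored binding is never overwritten, a CVM whose provider moves to a new address can never obtain its key again, matching the “permanently inaccessible” behavior described above.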

Why It Matters for Bittensor

This appears to be the first time a Bittensor subnet has published a formal security architecture alongside a major semiconductor manufacturer. Confidential computing is widely considered a prerequisite for enterprise adoption of decentralized AI. Organizations in healthcare, finance, and defense need hardware-level guarantees before moving sensitive workloads off centralized cloud providers.

The whitepaper lays out how those guarantees can work in a permissionless, decentralized environment. The architecture also enables secure monetization of proprietary AI models: developers can train, fine-tune, and serve models privately without risking intellectual property leakage. This could attract a new class of model creators to Bittensor who previously avoided decentralized infrastructure over security concerns.

Targon (SN4) is already one of the highest-revenue subnets in the Bittensor ecosystem, backed by over 1,500 H200 GPUs and a $10.5M Series A led by OSS Capital.

What Comes Next

The next step for Targon is implementing collateralized compute markets. Operators will post collateral to commit to multi-month compute contracts for various hardware types (B200, H100, L40S), paired with uptime and performance guarantees. This would create durable supply commitments in a trustless manner and push pricing even lower for buyers.

The full whitepaper is available on Manifold’s releases page and on Intel’s community blog.

FAQ:

What does the Targon and Intel whitepaper introduce?

The whitepaper introduces a confidential computing architecture that allows AI workloads to run securely on untrusted hardware. It combines Intel TDX for CPU-level encryption with NVIDIA Confidential Computing for GPU protection, enabling end-to-end data security across the entire hardware stack on the Bittensor network.

How does Intel TDX protect workloads on Targon?

Intel TDX creates hardware-isolated virtual machines called Trust Domains. Inside a Trust Domain, memory and CPU state are encrypted with keys that are inaccessible to the machine operator. Even someone with full physical control of the server cannot inspect or tamper with a running workload. Targon adds periodic re-attestation every 72 minutes to verify that nodes remain in a trusted state.

Why does this matter for Bittensor?

Confidential computing is a key requirement for enterprise adoption of decentralized AI. The whitepaper provides a concrete, hardware-backed security framework that allows companies handling sensitive data to use decentralized infrastructure without trusting individual hardware providers. It also enables developers to monetize proprietary AI models on Bittensor without risking intellectual property leakage.