Your Simple Guide to BitMind (SN34)

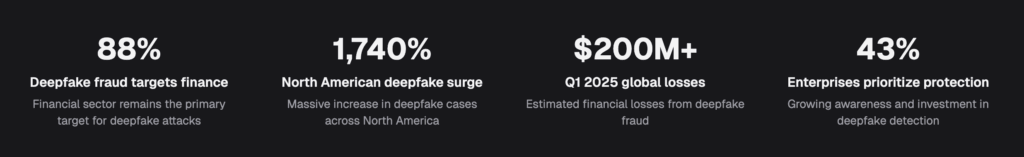

The global deepfake market reached an estimated $5.5 billion in 2023, according to Deloitte and Bloomberg. It continues to expand rapidly as synthetic media becomes easier and cheaper to produce. In addition, losses from deepfake-related fraud surpassed $1 billion in 2025 alone, according to a Surfshark analysis. High-profile cases already demonstrate the scale of the threat. In early 2024, a finance worker at the engineering firm Arup authorized $25 million in transfers after joining a video call. Every other participant on that call turned out to be a deepfake. With generative AI tools improving at an unprecedented pace, traditional detection methods struggle to keep up. BitMind (SN34) addresses this challenge by building an advanced deepfake detection system on Bittensor. The system runs on continuous adversarial competition between AI generators and AI detectors.

What is BitMind (SN34)?

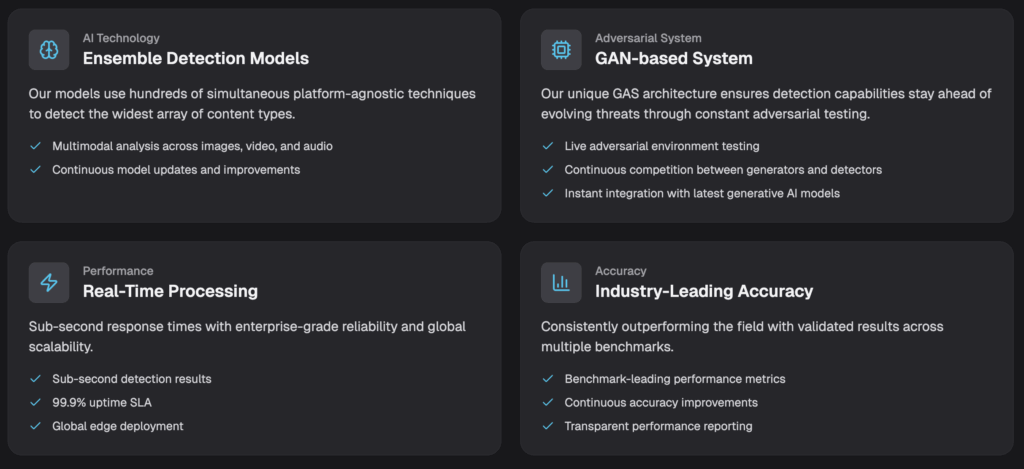

BitMind (SN34) is a Bittensor subnet that produces state-of-the-art AI-generated content detection across images, video, and audio. The project operates as Subnet 34 on the Bittensor mainnet. Officially, the team calls its mechanism GAS, which stands for Generative Adversarial Subnet. The name reflects the core design philosophy. It describes a system inspired by Generative Adversarial Networks (GANs) where detectors and generators compete in a continuous loop. As a result, each side pushes the other to improve.

In practical terms, BitMind (SN34) functions like a decentralized, ever-evolving deepfake detection engine. One group of miners builds detection models. These models classify media as real, fully synthetic, or partially modified by AI. Meanwhile, a second group of miners generates synthetic content designed to fool those detectors. Validators act as referees, scoring both sides and distributing rewards based on performance. Consequently, the detection models constantly adapt to the latest generative techniques.

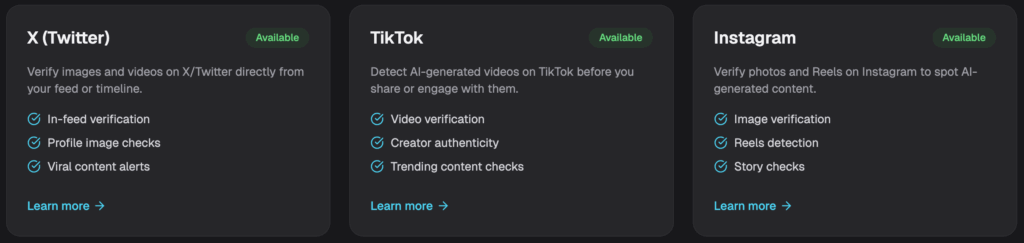

The subnet also produces outputs with direct real-world applications. Detection models flow into consumer and enterprise products on the BitMind platform. These products include a Chrome browser extension, a mobile app for iOS and Android, an enterprise API, and web-based detection tools. According to the company, the platform serves over 100,000 monthly active users across more than 100 countries, processes millions of detection requests per week, and achieves 95% accuracy on real-world content with sub-second response times. The company also reports SOC 2 compliance and a zero-data-retention policy.

What makes BitMind (SN34) particularly distinctive is how both sides of the competition grow simultaneously. Generators produce harder-to-detect fakes, so detectors become stronger. In turn, detectors improve, and generators must innovate further. This dynamic cycle means the network can adapt to new generative techniques in days or weeks. By comparison, centralized detection systems typically rely on periodic internal updates.

How does BitMind work?

BitMind (SN34) runs two parallel competition tracks. Together, they create the adversarial dynamic at the core of the subnet.

The first track involves discriminative miners. These participants submit detection models for image, video, and audio content. Each modality receives independent scoring. Importantly, miners submit their models to the network rather than hosting them on personal hardware. Validators then evaluate these models on cloud infrastructure. As a result, the capital required to participate drops significantly. The scoring metric is called sn34_score. It represents a geometric mean of the Matthews Correlation Coefficient (MCC) and the Brier score. MCC captures classification quality across both classes, while the Brier score, the mean squared error between predicted probabilities and true labels, captures calibration. In other words, models must not only classify media correctly but also produce well-calibrated confidence scores.
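The idea of combining MCC and the Brier score can be sketched in a few lines. This is a minimal illustration, not the subnet's actual sn34_score implementation: the function name `sketch_score`, the 0.5 decision threshold, and the way both metrics are rescaled to the 0 to 1 range before taking the geometric mean are all assumptions made for this example.

```python
import math


def matthews_corrcoef(labels, preds):
    """MCC for binary labels and predictions (1 = synthetic, 0 = real)."""
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    tn = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 0)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # MCC is conventionally set to 0 when any marginal count is empty.
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom


def brier_score(labels, probs):
    """Mean squared error between predicted probabilities and labels."""
    return sum((p - y) ** 2 for y, p in zip(labels, probs)) / len(labels)


def sketch_score(labels, probs, threshold=0.5):
    """Hypothetical combined score; the rescaling below is an assumption."""
    preds = [1 if p >= threshold else 0 for p in probs]
    mcc_norm = (matthews_corrcoef(labels, preds) + 1) / 2  # [-1, 1] -> [0, 1]
    brier_norm = 1 - brier_score(labels, probs)  # lower Brier is better, so flip
    return math.sqrt(mcc_norm * brier_norm)  # geometric mean of the two terms


labels = [1, 0, 1, 0]
confident = sketch_score(labels, [0.95, 0.05, 0.9, 0.1])
hesitant = sketch_score(labels, [0.6, 0.4, 0.6, 0.4])
print(confident > hesitant)
```

Both example models classify every item correctly, so their MCC is identical. The second one scores lower only because its probabilities sit close to 0.5, which is the calibration effect the Brier term is meant to capture.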

The second track involves generative miners. These participants run servers that produce synthetic media on demand. Validators send them prompts, and the miners respond with generated content. Generative miners earn a base reward for valid content. On top of that, they receive a multiplier based on how effectively their outputs fool the discriminative models. To prevent gaming, the system incorporates C2PA (Coalition for Content Provenance and Authenticity) standards. Validators verify cryptographic metadata in the generated media. This confirms that the content was produced through legitimate generative pipelines.
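The reward structure described above, a base reward scaled by a multiplier tied to how often outputs fool detectors, can be sketched as follows. Everything concrete here is an assumption for illustration: the function name `generator_reward`, the linear form of the multiplier, the `max_bonus` parameter, and the boolean `c2pa_valid` flag that stands in for the validator's real C2PA metadata verification.

```python
def generator_reward(base_reward, fooled, total, max_bonus=1.0, c2pa_valid=True):
    """Hypothetical reward for one generative miner.

    base_reward: reward for returning valid content
    fooled:      number of detector verdicts that called the output "real"
    total:       number of detector verdicts collected
    max_bonus:   assumed cap on the extra multiplier (not from the source)
    c2pa_valid:  stand-in for the validator's C2PA provenance check
    """
    if not c2pa_valid:
        # Content that fails the provenance check earns nothing in this sketch.
        return 0.0
    fool_rate = fooled / total if total else 0.0
    multiplier = 1.0 + max_bonus * fool_rate  # assumed linear multiplier
    return base_reward * multiplier


print(generator_reward(1.0, fooled=5, total=10))  # 1.5
```

The point of the sketch is the shape of the incentive: valid content always earns something, content that beats the detectors earns more, and content that fails provenance checks earns nothing.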

Validators orchestrate the entire process. They send prompts to generative miners and validate the returned synthetic media. At the same time, they continuously benchmark discriminative models against a diverse mix of data sources. These sources include content from generative miners, real-world data from the internet, and media generated locally on the validator itself. The evaluation datasets refresh weekly with fresh data from GAS-Station. This is a publicly available collection on Hugging Face that currently contains over 600,000 generated images and over 200,000 generated videos. Static benchmark datasets also supplement this rotating pool.

A recent addition involves segmentation challenges. Beyond simple classification (real vs. fake), miners can now operate as segmenters. These miners identify AI-generated regions within images through pixel-level analysis. This capability targets semi-synthetic media, where only portions of an image have been modified by AI. Examples include face swaps or inpainting. The miner produces a confidence mask where each pixel value represents the probability that the corresponding area was AI-generated.
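To make the confidence mask concrete, here is a small sketch that treats a mask as a grid of per-pixel probabilities, thresholds it into a binary mask, and compares it with a ground-truth mask using intersection over union (IoU). The 0.5 threshold and the use of IoU as the comparison metric are assumptions for illustration; the source does not state how segmenters are scored.

```python
def binarize(mask, threshold=0.5):
    """Turn per-pixel probabilities into a 0/1 mask (1 = AI-generated)."""
    return [[1 if p >= threshold else 0 for p in row] for row in mask]


def iou(pred, truth):
    """Intersection over union of two binary masks of equal shape."""
    inter = union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            inter += p & t
            union += p | t
    # Two empty masks agree perfectly, so treat that case as 1.0.
    return 1.0 if union == 0 else inter / union


# A tiny 2x3 "image": the miner is confident about the top-left region.
confidence_mask = [
    [0.9, 0.8, 0.1],
    [0.7, 0.2, 0.0],
]
ground_truth = [
    [1, 1, 0],
    [0, 0, 0],
]

predicted = binarize(confidence_mask)
print(predicted)
print(iou(predicted, ground_truth))
```

In this toy case the miner flags three pixels, two of which were actually modified, so the overlap is two pixels out of a union of three.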

Furthermore, the subnet enforces a strict one-model-per-modality-per-hotkey rule for discriminative miners. All submitted models must use the Safetensors format. Together, cloud-based evaluation, multi-track competition, weekly data refreshes, and strict anti-gaming measures create a system where only genuinely innovative detection approaches earn meaningful rewards.

Who is behind it?

Ken Miyachi co-founded BitMind and serves as CEO. Before starting BitMind, Miyachi worked as a Senior Tech Lead at the NEAR Foundation. He also previously founded LedgerSafe, a blockchain security company. Earlier in his career, he worked as an engineer at Amazon, building recommendation systems. His background spans blockchain infrastructure, security engineering, and distributed systems.

Dylan Uys co-founded BitMind alongside Miyachi and serves as Head of AI. Previously, Uys worked as a Machine Learning Engineer at ViaSat and Poshmark. There, he focused on computer vision and fraud detection systems. His experience in detecting fraudulent visual content directly informs the technical approach behind BitMind’s detection architecture.

The broader team includes Canh Trinh as Head of Engineering. Trinh previously served as an Engineering Lead at Axelar and held positions at JP Morgan and Deutsche Bank. In addition, Alexey Zenin serves as Blockchain Engineer and is a PhD Candidate at Texas A&M specializing in distributed systems. The team also includes specialists in AI engineering, full-stack development, backend systems, and digital marketing.

BitMind was founded in 2024 and is registered as BitMind Holdings Limited in the Cayman Islands. Operations run under BitMind Labs Inc. based in Dover, Delaware. The company raised $750,000 in funding led by Canonical, with participation from the NEAR Foundation.

Why is it valuable?

BitMind (SN34) addresses a structural weakness in AI content detection. Most existing tools rely on static models trained against known generative techniques. However, as new AI generators emerge and improve, these models quickly become outdated. The time between a new generative breakthrough and an effective detector often spans months. As a consequence, bad actors exploit this window for fraud, disinformation, and identity theft.

The adversarial architecture of BitMind (SN34) closes that gap. Generative miners constantly push the boundaries of what synthetic media looks like. In response, discriminative miners face perpetual pressure to adapt. The weekly dataset refresh from GAS-Station ensures that detection models train against the latest outputs. According to the team’s published research paper, this approach has achieved accuracy as high as 98.53% in controlled evaluations. Real-world detection accuracy reaches up to 91.95%.

The decentralized structure also provides a second layer of value. Centralized detection solutions carry inherent risks around bias, access control, and transparency. For instance, a corporation might optimize detection for specific content types while ignoring others. Similarly, a government-operated system might face pressure to classify certain content favorably. BitMind (SN34) avoids these risks because its models emerge from open, permissionless competition. Anyone can submit a better model. The network rewards accuracy regardless of who produced it.

The commercial product suite then transforms raw detection capability into accessible tools. The Chrome extension lets users hover over any image on the web. It then delivers an instant verdict with a confidence score. The mobile app provides the same functionality on phones. Meanwhile, the enterprise API allows organizations to integrate detection into their own workflows. It includes custom SLAs, SOC 2 compliance, and zero data retention. For sectors like financial services, where AI-generated fraud losses are projected to reach $40 billion in the United States by 2027, this kind of detection represents critical infrastructure.

In addition, the open-source nature of the detection models creates broader ecosystem value. Winning models from the GAS competition become publicly available. As a result, the entire research community benefits from improvements driven by the incentive mechanism. This stands in contrast to proprietary systems where advances stay locked behind corporate walls.

The future of BitMind

The most significant recent advancement is segmentation mining. This feature moves detection beyond binary classification into pixel-level identification of AI-modified regions within images. It directly addresses the growing threat of semi-synthetic media. In these cases, bad actors manipulate only specific portions of real images to create misleading content. As inpainting and face-swapping tools become more accessible, this granular detection approach becomes increasingly critical.

On the product side, BitMind has launched a native mobile app on both the App Store and Google Play under the name “BitMind: AI Detector & Creator.” The app allows users to detect AI-generated content instantly. They can also share results from any other app on their phone. Combined with the continuously improving Chrome extension, the product suite makes subnet-powered detection available across the most common platforms.

The broader vision positions BitMind as a full-stack AI content verification infrastructure provider. Beyond consumer tools, the team builds enterprise capabilities. These include custom implementation pipelines, dedicated support tiers, and compliance certifications for regulated industries. The company’s published roadmap for 2025 and 2026 focuses on scaling applications and ensuring enterprise-grade data privacy. At the same time, the team continues to advance the adversarial competition that drives the underlying detection technology. With generative AI evolving faster than ever and fraud risks growing in parallel, BitMind (SN34) stands as one of the most practically relevant subnets in the Bittensor ecosystem.

Sources:

https://github.com/BitMind-AI/bitmind-subnet

https://bitmind.ai