Claude Cowork: Working With an Autonomous AI Collaborator

Claude Cowork introduces a practical way of working with autonomous AI inside everyday workflows. From the first interaction, the system operates with continuity and intent, allowing users to delegate real objectives rather than manage isolated prompts. As a result, collaboration with AI begins to resemble task delegation instead of conversational control.

This shift matters because agentic AI is no longer an abstract concept. In practice, Claude Cowork shows how autonomy can function inside a personal computing environment, where files, context, and execution live close to the user.

How the Collaboration Model Works

At its core, this feature allows users to define an objective and step back while the system carries it forward. Instead of reacting to each message independently, the agent maintains context and progresses toward completion across multiple steps. Consequently, work unfolds over time rather than through fragmented exchanges.

This model changes how responsibility flows. The user sets intent and evaluates outcomes, while the system manages execution. Therefore, success depends more on clarity of goals than on prompt engineering.

macOS as the Execution Environment

At the moment, the feature is available exclusively on macOS through the Claude desktop application. This decision reflects a focus on deep operating system integration rather than broad platform coverage. By anchoring execution locally, the system can interact directly with files and folders in a controlled environment.

Importantly, this constraint shapes the experience. Work feels grounded, contained, and predictable. Files remain local, workflows stay familiar, and the AI operates alongside the user rather than as a distant service.

Task Persistence and Asynchronous Work

One of the most defining aspects of this approach is task persistence. Instructions function as objectives, not conversational turns. After receiving direction, the agent continues working asynchronously, managing dependencies and preserving context throughout the process.
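To make "instructions as objectives" concrete, here is a minimal sketch of a task runner that executes steps in dependency order and checkpoints progress so an interrupted objective can resume. It does not describe how Claude Cowork is implemented. The function `run_objective`, the JSON state file, and the step names are all hypothetical, chosen only for illustration.

```python
import json
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from pathlib import Path


def run_objective(steps, deps, state_file: Path):
    """Run steps in dependency order, skipping any already recorded as done.

    steps: dict mapping step name -> function(context) -> JSON-serialisable result
    deps:  dict mapping step name -> set of step names it depends on
    state_file: hypothetical checkpoint file holding results of finished steps
    """
    # Load earlier progress, if any, so the objective survives a restart.
    state = json.loads(state_file.read_text()) if state_file.exists() else {}
    for name in TopologicalSorter(deps).static_order():
        if name in state:
            continue  # finished in an earlier session
        # Each step receives the results of all steps completed so far.
        state[name] = steps[name](state)
        state_file.write_text(json.dumps(state))  # checkpoint after each step
    return state
```

Calling `run_objective` a second time with the same state file skips every step that already has a recorded result, which is the property that lets the user step away and return later.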

As a result, users avoid micromanagement. They intervene only when needed, which reduces cognitive overhead and supports longer, more complex workflows. Over time, this creates a rhythm closer to collaboration than interaction.

A Shift Toward Agentic AI Systems

This approach reflects a broader movement toward agentic AI, where systems act with initiative inside clearly defined boundaries. Rather than waiting for the next input, the agent pursues a goal and adapts actions as needed.

Because of this, evaluation criteria change. Reliability, follow-through, and outcome quality matter more than conversational fluency. In other words, the system earns trust by executing well, not by sounding intelligent.

Position Within the Claude Ecosystem

Within Anthropic’s broader ecosystem, this feature represents a move toward persistent execution models. Long-context reasoning and project-based workflows already exist, but autonomous follow-through adds a new layer of operational depth.

At the same time, the macOS-only release signals caution. Anthropic appears to prioritize safety, predictability, and alignment before expanding reach. This sequencing suggests a deliberate strategy rather than a limitation.

What This Signals for Knowledge Work

This model points toward a future where working with AI means delegating objectives rather than crafting prompts. By combining autonomy, context retention, and local integration, the system demonstrates how agentic AI can fit naturally into daily work.

Claude Cowork introduces a model in which AI becomes a persistent participant in the workflow. It reads files, processes information across multiple steps, and delivers outcomes with continuity and intent. As agentic AI moves from theory into practice, Claude Cowork provides a concrete example of how autonomy can be integrated into everyday knowledge work.

What Claude Cowork Is in Practice

Claude Cowork is a feature developed by Anthropic that enables Claude to execute delegated tasks autonomously within a defined working environment. Instead of interacting through isolated prompts, users describe an objective and allow Claude to carry it forward. The system maintains context, reasons through intermediate steps, and works toward a result without constant supervision.

At this stage, Claude Cowork is available only on macOS, delivered through the Claude desktop application. This limitation appears to be a deliberate design choice. Tight integration with the local operating system allows Claude Cowork to interact directly with the file system and manage tasks within a controlled execution environment. Rather than aiming for immediate cross-platform reach, Anthropic appears focused on refining how deeply an AI agent can be embedded into a personal computing workflow.

How Claude Cowork Operates Inside Workflows

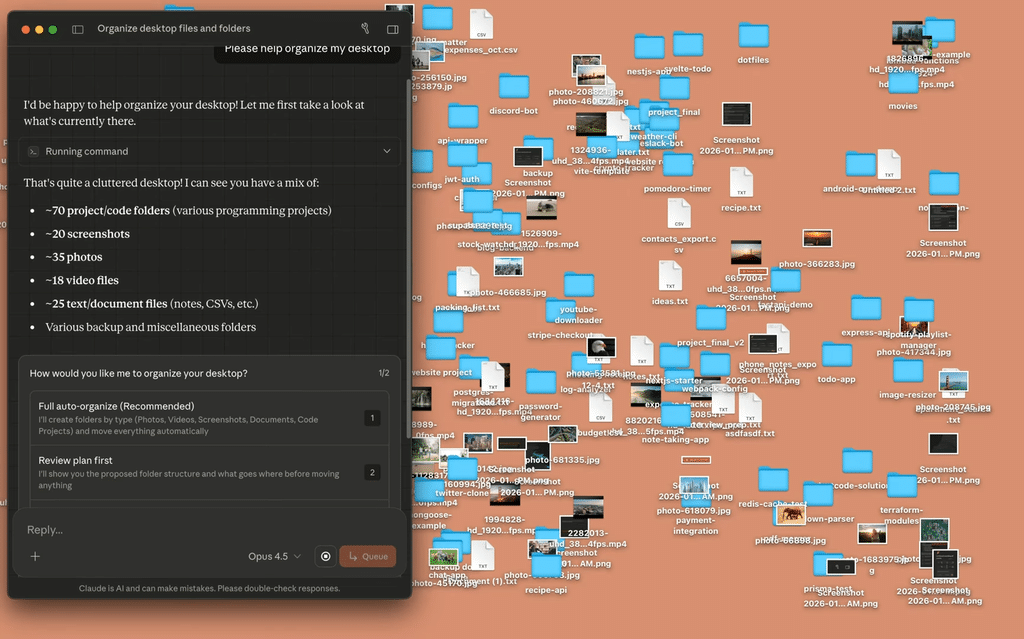

Claude Cowork operates within user-approved workspaces, typically folders containing documents, notes, screenshots, or project materials. Once access is granted, Claude can read, interpret, and manipulate content directly. This enables synthesis, restructuring, and generation of new materials grounded in existing assets.
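The idea of a user-approved workspace can be illustrated with a simple path containment check. This sketch assumes the approved workspace is a single folder and that every file request is resolved against it. The helper `resolve_in_workspace` is hypothetical and is not part of any Anthropic API. How Cowork actually enforces its boundaries is not described in this article.

```python
from pathlib import Path


def resolve_in_workspace(workspace: Path, requested: str) -> Path:
    """Return the absolute path for `requested` if it stays inside `workspace`.

    Raises PermissionError for paths that escape the approved folder,
    including escapes via ".." segments, absolute paths, or symlinks.
    """
    root = workspace.resolve()
    # Resolving collapses ".." segments and follows symlinks before the check.
    candidate = (root / requested).resolve()
    if not candidate.is_relative_to(root):  # Path.is_relative_to: Python 3.9+
        raise PermissionError(f"{requested!r} is outside the approved workspace")
    return candidate
```

A request such as `resolve_in_workspace(Path("workspace"), "notes/a.txt")` returns a path inside the folder, while `"../secret.txt"` raises `PermissionError`.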

A defining aspect of this experience is task persistence. Instructions are treated as objectives rather than conversational turns. Claude Cowork works asynchronously, managing dependencies between steps and preserving context throughout execution. The user does not need to micromanage each action. Instead, they focus on framing intent and evaluating outcomes.

Because Claude Cowork runs as a desktop experience on macOS, the interaction feels grounded and contained. Files remain local. Work unfolds within familiar structures. This reinforces the sense that Claude is not an external service being queried, but a collaborator operating alongside the user.

Claude Cowork and the Shift Toward Agentic AI

Claude Cowork reflects a broader transition toward agentic AI systems that act with initiative inside clearly defined constraints. Rather than responding passively, these systems are expected to pursue goals, manage state, and make local decisions aligned with user intent.

This model redistributes cognitive load. Claude Cowork absorbs operational complexity and repetitive coordination, allowing humans to focus on judgment, synthesis, and strategic direction. Success depends less on prompt craftsmanship and more on how clearly objectives are articulated.

In this sense, Claude Cowork is an early signal of a future where AI systems are judged by how reliably they execute rather than by how fluently they converse.

Claude Cowork Within the Claude Ecosystem

Within the broader Claude ecosystem, Claude Cowork represents a move toward persistent execution models. It builds on long-context reasoning and project-based workflows by adding continuity and autonomous follow-through.

This progression suggests a clear strategic direction. Claude is evolving from a conversational model into an operational system capable of participating in work over time. Claude Cowork is not an isolated experiment. It is part of a broader effort to understand how AI can collaborate without sacrificing alignment, safety, or predictability.

The macOS-only availability reinforces this approach. Claude Cowork is being shaped carefully within a constrained environment before broader expansion. The emphasis is on trust and reliability rather than immediate scale.

The Direction of Autonomous Work

Claude Cowork represents a meaningful step toward AI systems that function as collaborators rather than tools. By combining autonomy, context retention, and deep workflow integration, it demonstrates how agentic AI can become part of everyday work without feeling experimental or abstract.

As these systems mature, the distinction between centralized solutions like Claude Cowork and decentralized intelligence networks will become increasingly important. Each embodies a different philosophy of control, coordination, and trust.

Could a Cowork-Like Agent Exist as a Subnet on Bittensor?

Claude Cowork is intentionally centralized. Users grant Claude access to a specific local folder inside the macOS app, and the system acts only within that bounded environment. Anthropic offers it as a “research preview” feature for Claude Max subscribers.

A Bittensor-style approach would start from a different premise. In Bittensor, subnets are incentive systems in which miners produce a digital commodity and validators score output quality, with rewards driven by that evaluation. A Cowork-like subnet would therefore need to define two things: what commodity is being produced, and how validators can reliably judge whether that work was done well.
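The miner and validator relationship can be sketched as a toy scoring loop. This is a deliberate simplification and does not use the Bittensor SDK or reproduce its actual consensus and reward mechanism. The functions `score_output` and `distribute_rewards`, the keyword-based check, and the `emission` parameter are hypothetical stand-ins for whatever a real subnet would define.

```python
def score_output(output: str, required_terms: list[str]) -> float:
    """Toy validator check: fraction of required terms present in the output."""
    if not required_terms:
        return 0.0
    text = output.lower()
    return sum(term.lower() in text for term in required_terms) / len(required_terms)


def distribute_rewards(scores: dict[str, float], emission: float) -> dict[str, float]:
    """Split `emission` across miners in proportion to their scores."""
    total = sum(scores.values())
    if total == 0:
        return {miner: 0.0 for miner in scores}  # nobody produced scorable work
    return {miner: emission * s / total for miner, s in scores.items()}
```

Even this toy version shows the difficulty: a keyword check is easy to score but easy to game, while open-ended agent work has no comparably simple metric.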

Cowork’s value comes from acting on real user context under user-granted permissions. In a decentralized subnet, validators usually need access to the same inputs to score outputs – yet sharing personal files or proprietary documents for validation is often unacceptable. Even if you solve privacy, scoring “did the agent actually do the right thing” is non-trivial when tasks are open-ended and success criteria can be subjective.

On top of that, the more you allow an agent to execute actions, the more you must defend against prompt injection and ambiguous instructions – risks that Anthropic explicitly flags for Cowork-style agents operating on local files.

A subnet-based implementation could still be worthwhile if the goal is market-driven quality, with miners competing on validator scores, along with resilience and potentially different cost dynamics.