Covenant-72B: The Largest Decentralized LLM Training Run

Covenant-72B is a 72-billion-parameter language model pre-trained by the Templar team on Bittensor Subnet 3, entirely over commodity internet, with no centralized data center. The base model scored 67.1 on zero-shot MMLU, ahead of the centralized baselines LLaMA-2-70B (65.6) and LLM360 K2 (65.5) under the same evaluation conditions. It is the largest collaboratively trained LLM to use fully permissionless participation, with over 70 unique peers contributing compute throughout the run. The team released all weights and checkpoints under an Apache License.

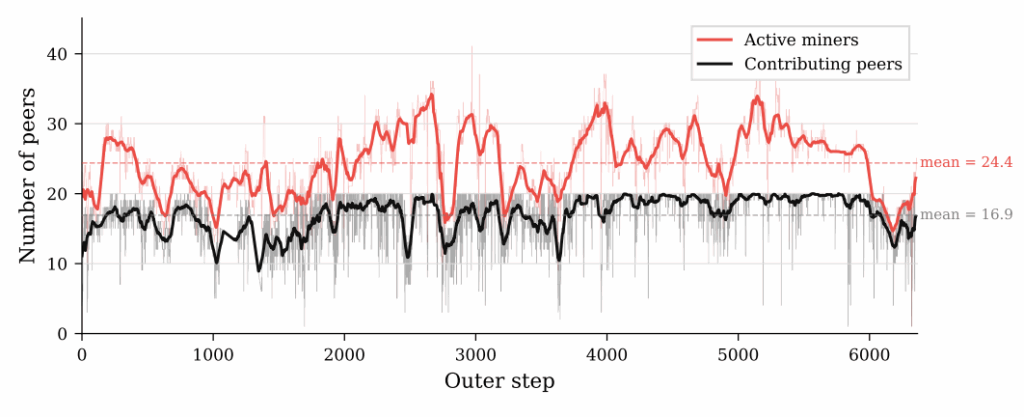

The accompanying research paper appeared on arXiv on March 9, 2026, and the model weights are hosted on Hugging Face. Unlike earlier decentralized training projects, Covenant-72B required no whitelist and no approval process: anyone meeting the minimum hardware requirement of 8 x NVIDIA B200 GPUs (or equivalent) could join or leave the training run at any time. According to the paper, the contributing peers were spread across different geographic locations and hardware setups.

Why Covenant-72B Matters for Decentralized AI Training

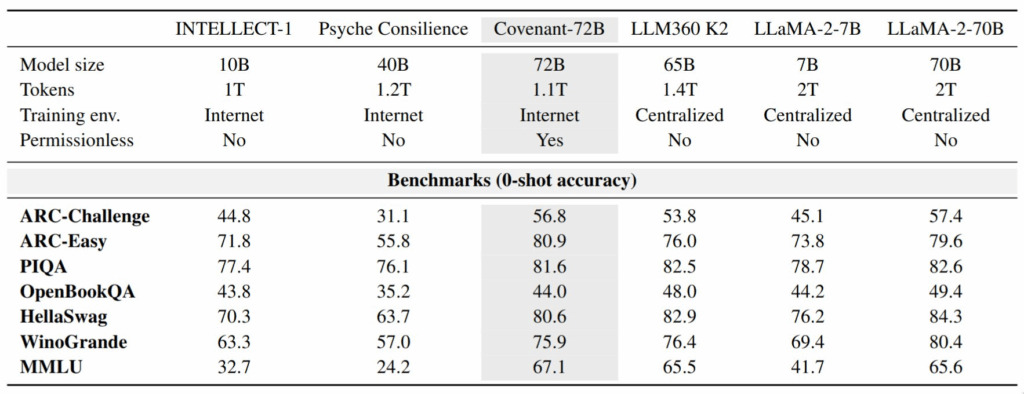

Earlier decentralized training efforts demonstrated that models can be trained across distributed nodes, but they generally remained limited in scale or operated with restricted participation. INTELLECT-1 by Prime Intellect, for instance, trained a 10B-parameter model across multiple continents using up to 112 H100 GPUs. More recently, Psyche Consilience by Nous Research launched a 40B-parameter distributed run on its testnet. Both projects relied on whitelisted participants to control who could contribute.

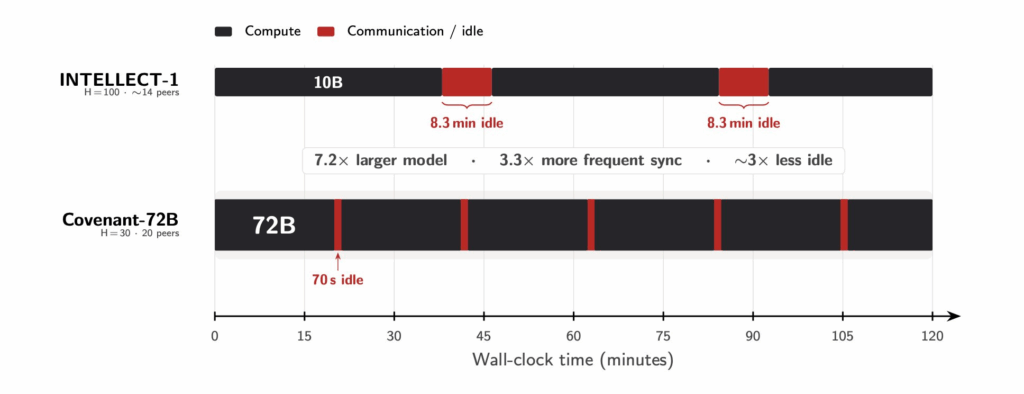

Covenant-72B differs on two fronts. First, the model is significantly larger: at 72 billion parameters, it is 7.2 times the size of INTELLECT-1. Second, the training operated as a fully open, permissionless system in which a blockchain-native validation mechanism handled trust and quality control continuously. That combination makes Covenant-72B the first decentralized LLM pre-training run to pair this scale with genuinely open participation.

The Bandwidth Problem and How SparseLoCo Solved It

The central technical challenge at 72B scale is bandwidth. Inside a traditional centralized cluster, GPU interconnects deliver speeds ranging from 400 Gb/s (InfiniBand) between servers to 3.6 TB/s (NVLink) within a single server. The Covenant-72B run, by contrast, assumed typical commodity internet connections with approximately 500 Mb/s download and 110 Mb/s upload per peer. Standard full-gradient synchronization at every training step is simply not feasible under those conditions.
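A rough calculation shows why dense synchronization is out of reach at these speeds. The sketch below assumes 2 bytes per gradient value (bf16) and ignores protocol overhead; both are illustrative assumptions, not figures from the paper.

```python
# Back-of-the-envelope estimate: time for one peer to upload a dense gradient.
# Assumptions (not from the paper): bf16 values (2 bytes each), no protocol overhead.

PARAMS = 72e9          # model parameters
BYTES_PER_VALUE = 2    # assumed bf16 gradient values
UPLOAD_MBPS = 110      # per-peer upload speed quoted in the article (megabits/s)


def dense_upload_seconds(params: float, bytes_per_value: int, upload_mbps: float) -> float:
    """Seconds needed to push one uncompressed gradient over the uplink."""
    bits = params * bytes_per_value * 8
    return bits / (upload_mbps * 1e6)


seconds = dense_upload_seconds(PARAMS, BYTES_PER_VALUE, UPLOAD_MBPS)
print(f"{seconds / 3600:.1f} hours per dense upload")
```

Under these assumptions a single uncompressed upload takes on the order of hours, which is why per-step synchronization is not an option.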

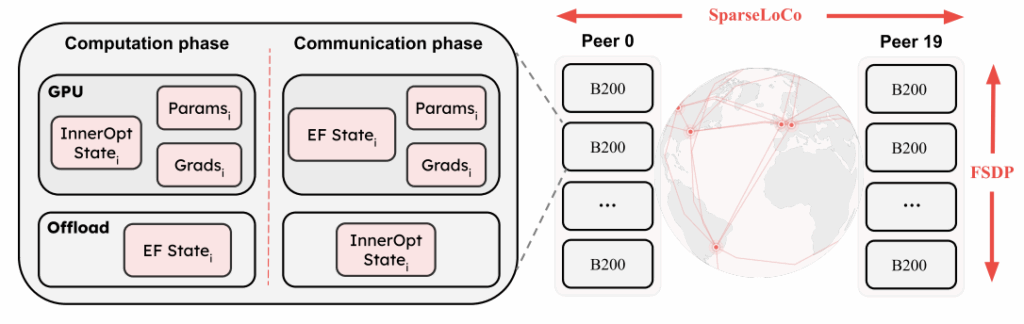

The Templar research team addressed this with an optimizer called SparseLoCo. Rather than synchronizing gradients at every step, each peer runs 30 local optimizer steps independently. It then compresses its pseudo-gradient (the change in its weights over those local steps) using a combination of top-k sparsification, 2-bit quantization, and error feedback.

This approach compresses the communication payload drastically. At the same time, an error-feedback buffer accumulates the information not transmitted in the current round, preserving training quality across cycles.
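The three ingredients named above can be illustrated with a small NumPy sketch. This is a simplified stand-in, not the paper's implementation: the global top-k selection, the uniform four-level quantizer, and the function names are all assumptions made for illustration.

```python
import numpy as np


def compress_with_error_feedback(pseudo_grad, error_buf, k):
    """Top-k sparsify and 2-bit quantize, returning the residual to carry forward."""
    # Add back whatever earlier rounds failed to transmit.
    acc = pseudo_grad + error_buf
    # Keep only the k largest-magnitude entries (k >= 1 assumed).
    idx = np.argpartition(np.abs(acc), -k)[-k:]
    vals = acc[idx]
    # 2-bit quantization: snap each kept value to one of 4 uniform levels.
    scale = np.max(np.abs(vals))
    levels = np.linspace(-scale, scale, 4)
    codes = np.abs(vals[:, None] - levels[None, :]).argmin(axis=1).astype(np.uint8)
    # Reconstruct what the receiver will see, and store the difference.
    sent = np.zeros_like(acc)
    sent[idx] = levels[codes]
    new_error = acc - sent
    return idx, codes, scale, new_error


def decompress(idx, codes, scale, size):
    """Rebuild the sparse, quantized update on the receiving side."""
    levels = np.linspace(-scale, scale, 4)
    out = np.zeros(size)
    out[idx] = levels[codes]
    return out


rng = np.random.default_rng(0)
grad = rng.normal(size=1000)
error = np.zeros_like(grad)
idx, codes, scale, error = compress_with_error_feedback(grad, error, k=50)
print(len(idx), "of", grad.size, "values sent; residual norm:", np.linalg.norm(error))
```

The key property is that the transmitted update plus the stored residual always equals the accumulated pseudo-gradient, so nothing is discarded permanently; it is only delayed to a later round.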

According to the paper, this produces a compression factor exceeding 146 times relative to dense gradient communication. Despite training a model 7.2 times larger than INTELLECT-1, the per-round communication overhead for Covenant-72B was approximately 70 seconds. For comparison, the Covenant-72B paper estimates INTELLECT-1’s equivalent overhead at 8.3 minutes. Compute utilization for the Covenant-72B run reached 94.5%, compared to approximately 82% reported for INTELLECT-1 under the Covenant paper’s methodology.
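The utilization and overhead figures can be related with simple arithmetic. The sketch below assumes utilization is defined as compute time divided by compute plus communication time per round; that definition is an assumption for illustration, and the paper's methodology may differ.

```python
def implied_compute_seconds(comm_seconds: float, utilization: float) -> float:
    """Compute time per round implied by a communication time and a utilization.

    Assumes utilization = compute / (compute + communication).
    """
    return comm_seconds * utilization / (1.0 - utilization)


# Figures quoted in the article: 70 s overhead at 94.5% utilization.
covenant = implied_compute_seconds(70.0, 0.945)
print(f"Implied compute phase: {covenant / 60:.1f} minutes per round")
```

Under that assumed definition, a 70-second communication phase at 94.5% utilization corresponds to roughly 20 minutes of local computation per round.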

Solving the Trust Problem Without a Whitelist

Permissionless participation introduces a fundamental trust problem. In a system where anyone can contribute, free-riders may copy the work of others. Lazy peers might submit random noise instead of properly computed gradients. Moreover, malicious actors could attempt adversarial model poisoning. In whitelisted systems, organizers vet participants once, before they join. The Covenant-72B system, by contrast, needed to validate every contribution automatically, every single round.

The mechanism handling this is called Gauntlet, a blockchain-native validator that scores submitted updates and decides which contributions deserve inclusion in the global model update. Gauntlet evaluates peers across several dimensions.

First, it checks loss on assigned versus unassigned data to catch free-riders who simply copy gradients. The system also runs liveness and model synchronization checks to confirm that each peer is actually training. On top of that, OpenSkill ranking stabilizes noisy per-round scores over time. Finally, magnitude normalization prevents any single peer from disproportionately influencing the model update.
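Two of these checks can be sketched in a few lines. The code below is a hypothetical illustration, not Gauntlet's actual scoring: the margin formula, the threshold, and all function names are assumptions, and the OpenSkill ranking and liveness checks are not modeled.

```python
import numpy as np


def assigned_data_margin(before_assigned, after_assigned,
                         before_unassigned, after_unassigned):
    """Loss improvement on assigned data minus improvement on unassigned data.

    Assumed interpretation: a peer that really trained on its assigned shard
    should help there at least as much as on data it was never given.
    """
    gain_assigned = before_assigned - after_assigned
    gain_unassigned = before_unassigned - after_unassigned
    return gain_assigned - gain_unassigned


def normalize_magnitude(update, target_norm=1.0, eps=1e-12):
    """Rescale an update so no peer dominates through sheer magnitude."""
    norm = np.linalg.norm(update)
    return update * (target_norm / (norm + eps))


def aggregate(updates, scores, min_score=0.0):
    """Average the normalized updates of peers whose score clears the threshold."""
    kept = [normalize_magnitude(u) for u, s in zip(updates, scores) if s > min_score]
    if not kept:
        return None
    return np.mean(kept, axis=0)


updates = [np.array([3.0, 4.0]), np.array([300.0, 400.0]), np.array([1.0, 0.0])]
scores = [0.2, 0.1, -0.5]  # the third peer fails the check and is dropped
print(aggregate(updates, scores))
```

In this toy setup the second peer's update is 100 times larger than the first, yet after normalization both contribute equally to the aggregate.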

The Gauntlet mechanism runs on the Bittensor blockchain, which provides the economic infrastructure for rewarding honest participants and penalizing dishonest behavior. Notably, this represents one of the first cases where blockchain-native validation supports LLM pre-training at scale.

Covenant-72B Benchmark Results Compared to Centralized Models

The researchers evaluated the pre-trained Covenant-72B base model against centralized baselines trained on comparable compute budgets. All results reported below are zero-shot, accuracy-normalized scores, as specified in the paper. This is an important distinction, because widely cited benchmark scores for models like LLaMA-2-70B often use 5-shot evaluation, which typically produces higher numbers.

The fact that a fully decentralized, permissionless training run produced a model that exceeds these centralized baselines on MMLU under identical evaluation conditions is a notable technical result.

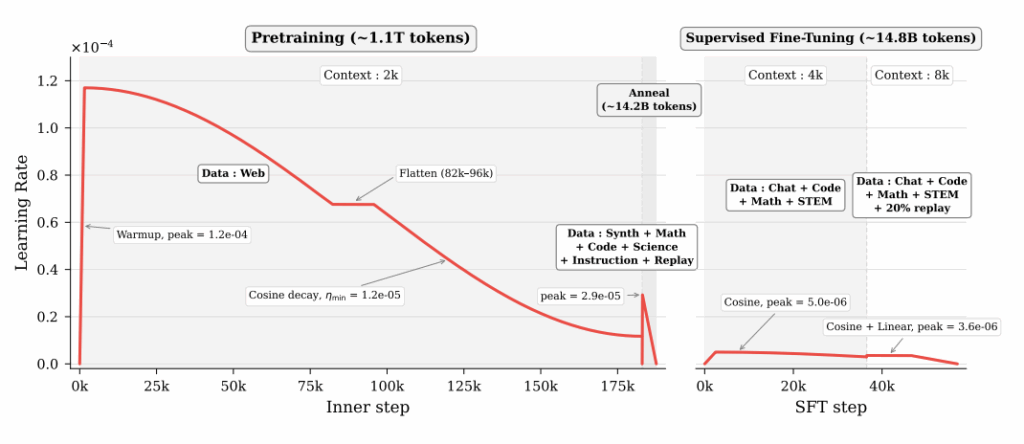

The team also produced a post-trained variant called Covenant-72B-Chat. They created it through a two-stage supervised fine-tuning (SFT) pipeline that extended the context length from 2,048 to 8,192 tokens. The fine-tuning stage used approximately 14.8 billion tokens. According to the paper, the chat model showed particularly strong performance on IFEval and MATH benchmarks relative to comparable centralized chat models. These results suggest improved instruction-following and mathematical reasoning capabilities after post-training.

Training Setup and Technical Details

Covenant-72B follows a LLaMA-style architecture, and the team trained it on approximately 1.1 trillion tokens. Each participating peer operated with a minimum of 8 x NVIDIA B200 GPUs (or equivalent), using dynamic Fully Sharded Data Parallel (FSDP) to distribute model parameters, gradients, and optimizer states across local GPUs within each node.

In practice, the training alternated between a computation phase and a communication phase. During computation, peers ran their local optimizer steps independently. During communication, the system aggregated compressed pseudo-gradients across all active peers.
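The alternation between the two phases can be illustrated with a toy loop. This is a minimal sketch under stated assumptions: each peer minimizes a simple quadratic with plain gradient descent, updates are averaged without compression, and the learning rates are arbitrary. None of these choices come from the paper.

```python
import numpy as np


def local_steps(theta, target, steps, lr):
    """Run `steps` of gradient descent on 0.5 * ||theta - target||^2."""
    theta = theta.copy()
    for _ in range(steps):
        theta -= lr * (theta - target)
    return theta


def train(peer_targets, rounds, local_step_count=30, inner_lr=0.05, outer_lr=1.0):
    """Alternate independent local computation with a shared aggregation step."""
    theta = np.zeros_like(peer_targets[0])
    for _ in range(rounds):
        # Computation phase: every peer trains on its own data from the same start.
        pseudo_grads = [
            theta - local_steps(theta, target, local_step_count, inner_lr)
            for target in peer_targets
        ]
        # Communication phase: average the pseudo-gradients and apply them once.
        theta = theta - outer_lr * np.mean(pseudo_grads, axis=0)
    return theta


targets = [np.array([1.0, 1.0]), np.array([3.0, -1.0])]
print(train(targets, rounds=10))
```

Each peer pulls toward its own optimum during the computation phase, and the averaged update moves the shared model toward a point that balances all peers.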

The SparseLoCo optimizer managed this cycle, while the error-feedback buffer ensured that information lost to compression in one round carried forward to the next. As a result, the training process maintained high throughput even over low-bandwidth connections.

Participation remained near the network capacity throughout the training run. The team released the model weights, intermediate checkpoints, and post-training checkpoints under an Apache License, making everything fully open-source. All checkpoints and the final Covenant-72B model are publicly accessible on Hugging Face.

Covenant AI and the Broader Bittensor Ecosystem

The team behind Covenant-72B originally operated under the name Templar and has since expanded into Covenant AI, a broader organization building three interconnected platforms on the Bittensor network. Templar (Subnet 3) handles decentralized pre-training. Basilica (Subnet 39) focuses on decentralized compute supply. Grail (Subnet 81) targets reinforcement learning-based post-training.

The trajectory from Templar to Covenant-72B has been rapid. The team started with smaller model runs in the 1B to 8B parameter range to harden infrastructure and refine the reward mechanism. The SparseLoCo optimizer and Gauntlet validator both emerged from that iterative process. The NeurIPS 2025 Optimization Workshop accepted two papers underpinning this work, which provided early academic visibility for the approach. The research involved contributions from researchers affiliated with Mila, one of the leading AI research institutes globally.

What Covenant-72B Means for the Future of AI Training

The significance of Covenant-72B is not that it outperforms the best centralized models. It does not. Current frontier models are trained with orders of magnitude more compute. According to Epoch AI research from late 2025, the largest decentralized training runs at that time operated at roughly 1,000 times less compute than frontier models. While Covenant-72B is substantially larger than those earlier runs, the gap to the frontier remains significant.

What the project does demonstrate, however, is that the coordination problem in decentralized training can be solved at meaningful scale. The Templar (SN3) team trained a 72B model with over 70 permissionless peers across commodity internet connections, while maintaining 94.5% compute utilization and producing results competitive with centralized baselines. That represents a significant step forward for the field.

As the team framed it in their announcement, the constraint was never physics. It was always coordination. Covenant-72B suggests that coordination at this scale, with trustless validation and aggressive communication compression, is now achievable.

Resources

The full research paper is available on arXiv.

The model weights can be accessed on Hugging Face.

A live chat demo powered by the Covenant-72B-Chat model is available at tplr.ai/chat.

Frequently Asked Questions

What is Covenant-72B?

Covenant-72B is a 72-billion-parameter large language model pre-trained by the Templar team on Bittensor Subnet 3. The team trained it entirely over commodity internet connections, with no centralized data center, using fully permissionless participation from over 70 global peers. All weights and checkpoints are released under an Apache License.

What is SparseLoCo?

SparseLoCo is a communication-efficient optimizer that reduces bandwidth requirements for distributed training. Each peer runs 30 local optimizer steps, then compresses its pseudo-gradient using top-k sparsification, 2-bit quantization, and error feedback. This achieves over 146 times compression relative to dense gradient communication.

How does Covenant-72B perform on benchmarks?

The base model scored 67.1 on MMLU (zero-shot), exceeding centralized baselines LLaMA-2-70B (65.6) and LLM360 K2 (65.5) under the same evaluation methodology. The post-trained Covenant-72B-Chat variant showed strong results on IFEval and MATH benchmarks.

Who built Covenant-72B?

Covenant AI, formerly known as Templar, built the model. The team operates on the Bittensor blockchain and is led by Samuel Dare, with research contributions from Joel Lidin (corresponding author) and collaborators affiliated with Mila.