Your Simple Guide to Affine (SN120)

Reinforcement learning is the technique behind many of the most impressive breakthroughs in AI, from game-playing systems to reasoning models. Yet applying reinforcement learning at scale remains extraordinarily expensive and concentrated in the hands of a few large corporations. Affine (SN120) changes this by creating a decentralized, incentivized environment on Bittensor where anyone can contribute to improving AI reasoning capabilities and earn rewards for doing so.

What is Affine?

Affine is a decentralized reinforcement learning (RL) subnet running as Subnet 120 (SN120) on Bittensor. The project describes its mission as commoditizing reasoning, which it considers the highest form of intelligence. In practical terms, Affine pays miners who make incremental improvements to AI models on a defined set of tasks such as program abduction and coding.

The core idea is straightforward. Reinforcement learning requires enormous amounts of compute and iteration to improve model performance on complex tasks. Traditionally, only well-funded labs can afford to run these training loops at scale. Affine distributes that work across a global, permissionless network of participants. Anyone can download the current best-performing model, improve it, and submit the improved version for evaluation. If the improvement is genuine, the contributor earns rewards.

What sets Affine apart from other Bittensor subnets is its role as a higher-order coordinator within the ecosystem. Rather than specializing in a single task like inference or data collection, Affine (SN120) connects multiple subnets. The RL competition produces improved models that are hosted on Subnet 64 (Chutes), making them publicly available for inference. As a result, advances from Affine’s incentive mechanism flow immediately into the broader Bittensor network.

Affine (SN120) has rapidly become one of the highest-ranked subnets by emissions on the Bittensor network. It has held positions in the top 3 by emission share, at times claiming the number one spot before Chutes (SN64) took the lead. Bittensor co-founder Jacob Steeves has publicly described Affine as one of the largest subnets on the network and one of its most competitive mechanisms.

How does Affine work?

Affine uses a winner-take-all incentive mechanism built around the concept of the Pareto frontier. In multi-objective optimization, the Pareto frontier is the set of solutions that no other solution dominates: none of them can be improved in one objective without getting worse in another. In Affine’s context, validators evaluate submitted models across multiple RL environments simultaneously. A model that Pareto-dominates the field, meaning it scores at least as well as every other model in every environment and strictly better than each of them in at least one, earns 100% of subnet emissions.
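The dominance rule can be made concrete with a short Python sketch. It assumes each model has already been reduced to one score per environment, uses "dominates" in the standard Pareto sense, and pays the whole emission to a model that dominates every other submission. The helper names (`dominates`, `pareto_frontier`, `find_winner`, `emission_weights`), the made-up scores, and the choice to pay nobody when no model dominates are illustrative assumptions, not Affine's actual code.

```python
from typing import Dict, List, Optional

# Hypothetical score table: model name -> {environment name -> score}.
Scores = Dict[str, Dict[str, float]]


def dominates(a: Dict[str, float], b: Dict[str, float]) -> bool:
    """True if `a` Pareto-dominates `b`: at least as good in every
    environment and strictly better in at least one."""
    if a.keys() != b.keys():
        raise ValueError("both models must be scored on the same environments")
    return all(a[e] >= b[e] for e in a) and any(a[e] > b[e] for e in a)


def pareto_frontier(scores: Scores) -> List[str]:
    """Models that no other model dominates."""
    return [
        m for m in scores
        if not any(dominates(scores[o], scores[m]) for o in scores if o != m)
    ]


def find_winner(scores: Scores) -> Optional[str]:
    """The model that dominates every other model, if one exists."""
    for m in scores:
        if all(dominates(scores[m], scores[o]) for o in scores if o != m):
            return m
    return None


def emission_weights(scores: Scores) -> Dict[str, float]:
    """Winner-take-all: 1.0 for the dominating model, 0.0 for everyone else.
    Assumption for this sketch: if no model dominates, nobody is paid."""
    winner = find_winner(scores)
    return {m: 1.0 if m == winner else 0.0 for m in scores}


# Illustrative, made-up scores; DED-V2 and ABD-V2 are Affine environment labels.
scores = {
    "miner_a": {"DED-V2": 0.62, "ABD-V2": 0.48},
    "miner_b": {"DED-V2": 0.58, "ABD-V2": 0.51},
    "miner_c": {"DED-V2": 0.66, "ABD-V2": 0.53},
}
print(find_winner(scores))       # miner_c
print(emission_weights(scores))  # {'miner_a': 0.0, 'miner_b': 0.0, 'miner_c': 1.0}
```

In this naive version, adding a specialist such as "miner_d" with 0.90 on DED-V2 and 0.10 on ABD-V2 leaves no model that dominates everyone, so nobody is paid. That is the kind of decoy the real mechanism is said to resist, so Affine's actual rule presumably handles this case differently.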

The evaluation process begins when a miner submits an improved model. Unlike many subnets where miners run compute locally, Affine leverages Chutes (SN64) as its model hosting and inference layer. When a miner fine-tunes a model they believe is better than the current leader, they deploy it to Chutes. From there, the model becomes publicly available through Chutes’ API for load-balanced inference. Validators then pull the deployed model and run it against a suite of standardized RL environments packaged as Docker images.
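A minimal Python sketch of the validator side of this loop follows. It assumes a model can be treated as a function from prompt to answer and an environment as a list of prompts with expected answers, scored by exact match. In Affine itself the model sits behind Chutes’ API and each environment ships as a Docker image, so `score_model`, `evaluate_all`, and the toy ADD and REV environments are hypothetical stand-ins.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-ins: a "model" maps a prompt to an answer, and an
# "environment" is a list of (prompt, expected_answer) tasks. In Affine the
# model sits behind Chutes' API and each environment ships as a Docker image.
Model = Callable[[str], str]
Environment = List[Tuple[str, str]]


def score_model(model: Model, env: Environment) -> float:
    """Fraction of the environment's tasks the model answers exactly."""
    if not env:
        return 0.0
    correct = sum(1 for prompt, expected in env if model(prompt).strip() == expected)
    return correct / len(env)


def evaluate_all(
    models: Dict[str, Model], envs: Dict[str, Environment]
) -> Dict[str, Dict[str, float]]:
    """Run every model on every environment to build per-environment score vectors."""
    return {
        name: {env_name: score_model(model, env) for env_name, env in envs.items()}
        for name, model in models.items()
    }


# Toy models and environments, invented for illustration only.
def adder(prompt: str) -> str:
    parts = prompt.split("+")
    return str(sum(int(p) for p in parts)) if all(p.isdigit() for p in parts) else ""


def reverser(prompt: str) -> str:
    return prompt[::-1]


envs = {
    "ADD": [("2+3", "5"), ("10+7", "17")],
    "REV": [("abc", "cba"), ("hello", "olleh")],
}
results = evaluate_all({"adder": adder, "reverser": reverser}, envs)
print(results)
# {'adder': {'ADD': 1.0, 'REV': 0.0}, 'reverser': {'ADD': 0.0, 'REV': 1.0}}
```

Each toy model is perfect in one environment and useless in the other, so neither would Pareto-dominate the other. The nested result is the kind of per-environment score table that a dominance check works on.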

The team designed the mechanism specifically to resist common attack vectors. Sybil-proofing prevents miners from gaining an advantage by deploying multiple fake identities. Decoy-proofing stops them from cheating with decoy models tuned to specific environments. Copy-proofing blocks anyone from simply copying the top model and resubmitting it without improvement. Finally, overfitting-proofing ensures that no model can win by performing well on a single benchmark while failing on others.
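The article does not spell out how copy-proofing is implemented. One plausible way to obtain the property is sketched below in Python, under loudly stated assumptions: each submission records the block at which it was committed, and a later submission only displaces an earlier one if it is better by a fixed margin in every environment. The `Submission` class, the `displaces` function, the block numbers, the 0.02 margin, and the scores are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Submission:
    name: str
    block: int                # hypothetical: block height at which the model was committed
    scores: Dict[str, float]  # per-environment scores from validators


def displaces(challenger: Submission, incumbent: Submission, margin: float = 0.02) -> bool:
    """Hypothetical copy-proofing rule: a later submission replaces an earlier
    one only if it beats it by at least `margin` in every environment, so an
    identical (or barely perturbed) copy can never take over."""
    if challenger.block < incumbent.block:
        raise ValueError("challenger must be committed after the incumbent")
    return all(
        challenger.scores[env] >= incumbent.scores[env] + margin
        for env in incumbent.scores
    )


leader = Submission("leader", block=100, scores={"DED-V2": 0.60, "ABD-V2": 0.50})
copycat = Submission("copycat", block=120, scores={"DED-V2": 0.60, "ABD-V2": 0.50})
tweaker = Submission("tweaker", block=125, scores={"DED-V2": 0.61, "ABD-V2": 0.51})
improver = Submission("improver", block=130, scores={"DED-V2": 0.66, "ABD-V2": 0.56})

print(displaces(copycat, leader))   # False
print(displaces(tweaker, leader))   # False
print(displaces(improver, leader))  # True
```

Because the earlier submission never has to clear the margin, a verbatim copy, or a copy whose scores differ only by evaluation noise, cannot take the top spot, while a genuine improvement still can.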

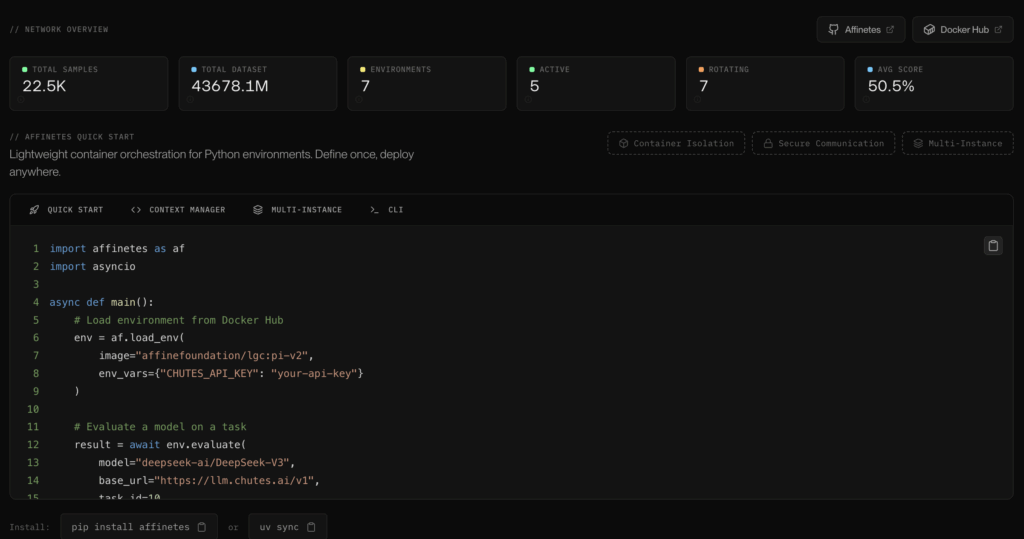

A critical design choice is that all models are open source. When a model wins, it becomes the new public baseline. Other miners then download it, study it, and improve upon it. This creates a compounding cycle of improvement where each generation of models builds on the previous one. To manage evaluation environments, the project developed a lightweight container orchestration system called Affinetes, supporting both local and remote Docker deployments with environment caching for improved performance.
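Affinetes’ own interface is not described here, but the idea of environment caching can be illustrated with a hypothetical Python class that starts each environment once per image and host and reuses the running instance afterward. The `EnvironmentCache` class, the launcher signature, the image names, and the host name are assumptions; the launcher is a fake that returns a string instead of starting a real Docker container.

```python
from typing import Callable, Dict, Optional, Tuple

# Hypothetical launcher signature: (image, host) -> handle. host=None means
# local Docker; a string stands for a remote Docker host. Not the Affinetes API.
Launcher = Callable[[str, Optional[str]], object]


class EnvironmentCache:
    """Reuse one running environment per (image, host) pair instead of
    starting a fresh container for every evaluation."""

    def __init__(self, launcher: Launcher) -> None:
        self._launcher = launcher
        self._live: Dict[Tuple[str, Optional[str]], object] = {}
        self.launches = 0  # how many times the launcher actually ran

    def get(self, image: str, host: Optional[str] = None) -> object:
        key = (image, host)
        if key not in self._live:
            self._live[key] = self._launcher(image, host)
            self.launches += 1
        return self._live[key]


def fake_launcher(image: str, host: Optional[str]) -> str:
    # Stand-in for starting a container; returns a descriptive handle.
    return f"{image}@{host or 'local'}"


cache = EnvironmentCache(fake_launcher)
cache.get("example/ded-v2:latest")
cache.get("example/ded-v2:latest")                # cache hit, no new launch
cache.get("example/ded-v2:latest", "gpu-node-1")  # different host, new launch
print(cache.launches)  # 2
```

Keying the cache on both image and host mirrors the local-versus-remote split mentioned above: the same image on a different Docker host counts as a separate environment.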

The current RL environments include tasks labeled as DED-V2 and ABD-V2, among others. Miners can use the Affine SDK to evaluate their models before submission, and the af CLI tool provides commands for querying mining status, pulling models from the network, and deploying to Chutes.

Who is behind it?

Jacob Steeves (also known as Const), a co-founder of the Bittensor project itself, founded Affine. Const brought the vision of applying crypto-economic incentives directly to reinforcement learning, creating what the project describes as “directed incentives for RL,” something it says no one had achieved before.

The project has strong participation from the Chinese AI developer community. In an interview with ChainCatcher, Steeves highlighted Affine as one of the largest subnets built by Chinese developers, describing the engineering talent involved as “genuinely very high, almost unparalleled.” This international composition reflects Bittensor’s broader goal of enabling open, permissionless contribution from anywhere in the world.

Affine is actively hiring for specialized roles including Research Engineer (ML/RL), Protocol Engineer (Incentive Design & Validation), and Senior Software Architect. This hiring focus signals a continued emphasis on deep RL expertise and robust mechanism design. All code is published on GitHub, and the team maintains an active community on Discord and on X (@affine_io). A live dashboard tracking network activity is available at affine.io.

Why is it valuable?

Affine addresses a fundamental bottleneck in AI development. Reinforcement learning is the technique that enables models to reason, plan, and improve through trial and error. It powers many of the most advanced capabilities in modern AI systems. However, RL training is computationally expensive and difficult to coordinate at scale. By creating a decentralized market for RL improvements, Affine turns this challenge into an open competition where the best work rises to the top regardless of who produces it.

The mechanism’s resistance to gaming is a key differentiator. In many decentralized systems, participants find ways to exploit reward mechanisms without contributing real value. Affine’s four-layer protection against Sybil attacks, decoys, copying, and overfitting is designed so that only genuine model improvements earn rewards. This makes the system more efficient and is meant to ensure that the models it produces actually get better over time.

The integration with Chutes (SN64) creates immediate practical value. Because miners deploy their submissions to Chutes for evaluation, every winning Affine (SN120) model is already available there for public inference. This means that improvements in reasoning capability flow directly into real-world applications without any additional deployment step. Other subnets and external developers can access these models through Chutes’ API, creating a direct pipeline from RL research to production use.

Affine’s position within the Bittensor ecosystem is also structurally significant. It acts as connective infrastructure between subnets, ensuring that developers can compose and use advances from one area across the entire network. Without this kind of coordination layer, the ecosystem risks fragmenting into subnets that develop in isolation. Affine (SN120) counters that by making the best models available network-wide and directing competitive pressure toward the tasks that matter most.

Sources:

https://github.com/AffineFoundation/affine-cortex

https://www.affine.io