Your Simple Guide to Gradients (SN56)

Fine-tuning an AI model to fit a specific business need typically requires a team of machine learning engineers and a budget that starts in the tens of thousands of dollars. Services like Google Cloud Vertex AI charge over $10,000 for a single 70B model fine-tuning session. Most businesses simply cannot afford that. Gradients (SN56) solves this by turning AI model training into a competitive marketplace on Bittensor, where multiple miners race to produce the best result for a fraction of the cost.

What is Gradients?

Gradients runs as Subnet 56 (SN56) on Bittensor. The full name of the project is Gradients on Demand (G.O.D), and it operates as a competitive AutoML platform. AutoML stands for Automated Machine Learning, a category of tools that handle the complex decisions behind model training automatically. Gradients takes this concept further by distributing the work across a decentralized network of competing miners.

The platform allows anyone to fine-tune AI models without writing code. Users upload a dataset, select a model, and Gradients (SN56) assigns the task to multiple miners who independently search for the best training configuration. Unlike traditional AutoML services, which rely on a single predetermined strategy, Gradients pools multiple approaches in parallel. As a result, the final model often outperforms what any single method could achieve.

Gradients (SN56) supports four types of fine-tuning. Instruct fine-tuning teaches models to follow specific instructions and respond in a desired style. DPO (Direct Preference Optimization) trains models to prefer certain response patterns by showing them pairs of preferred and rejected answers. GRPO (Group Relative Policy Optimization) trains models to maximize the scores assigned by reward functions. Diffusion fine-tuning handles image models, training them to generate visuals in specific styles or of specific subjects.
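To make the DPO and GRPO descriptions more concrete, here is a minimal sketch of the core quantity behind each method, using the standard textbook formulas rather than anything taken from the Gradients codebase. The function names, the `beta` default, and the sample numbers are illustrative assumptions.

```python
import math
from statistics import mean, pstdev


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one (preferred, rejected) answer pair.

    Inputs are log-probabilities of each full answer under the model being
    trained (policy) and under a frozen reference model. beta is a
    hypothetical default chosen for illustration.
    """
    # How much more the policy favors each answer than the reference does.
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(x)) written as log(1 + exp(-x)); numerically naive,
    # which is fine for a sketch with small inputs.
    return math.log1p(math.exp(-logits))


def group_relative_advantages(rewards):
    """GRPO-style advantages: each reward standardized within its group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    if sigma == 0:
        # All answers scored the same, so none is preferred over another.
        return [0.0 for _ in rewards]
    return [(r - mu) / sigma for r in rewards]


# Illustrative values only: the policy already leans toward the preferred answer.
print(dpo_loss(-1.0, -3.0, -2.0, -2.0))
# Rewards for three sampled answers to the same prompt.
print(group_relative_advantages([1.0, 2.0, 3.0]))
```

The DPO loss shrinks as the model favors the preferred answer more strongly than the reference model does. The GRPO advantages are positive for answers that beat their group's average reward and negative for those that fall below it.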

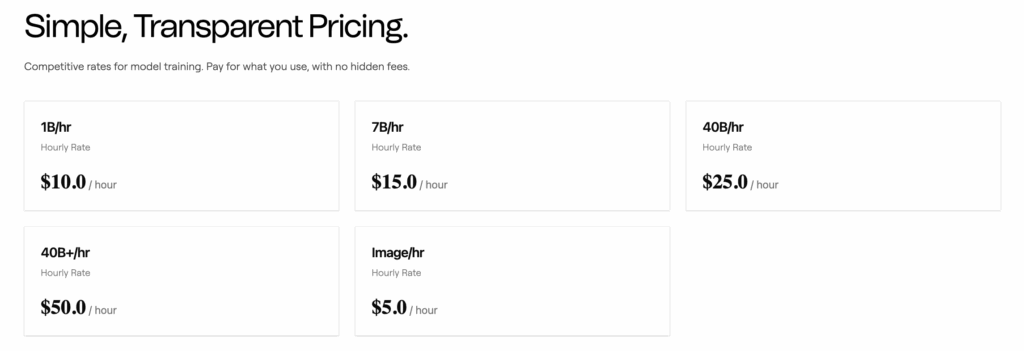

The platform offers competitive pricing across all model sizes. Smaller models cost around $100 to fine-tune, 20B parameter models around $250, and 70B models around $500. These figures compare directly against Google Cloud’s pricing of over $10,000 for equivalent work on their Vertex AI platform.
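The size of the gap is easy to check from the figures quoted above. The short calculation below uses only those prices. The dictionary keys and function name are illustrative, and because the Vertex AI figure is stated as "over $10,000", the result is a lower bound.

```python
# Prices quoted in this article, in US dollars.
GRADIENTS_PRICES = {"small": 100, "20B": 250, "70B": 500}
VERTEX_70B_FLOOR = 10_000  # "over $10,000", so treated as a lower bound


def cost_ratio(incumbent_price, gradients_price):
    """How many times more the incumbent charges for the same job."""
    return incumbent_price / gradients_price


ratio_70b = cost_ratio(VERTEX_70B_FLOOR, GRADIENTS_PRICES["70B"])
print(f"70B fine-tune: at least {ratio_70b:.0f}x cheaper")
# prints "70B fine-tune: at least 20x cheaper"
```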

How does Gradients work?

Gradients (SN56) introduced a tournament-based incentive mechanism in July 2025 that fundamentally changed how miners compete. Instead of miners training models on their own hardware and returning finished results, they now submit links to their open-source training scripts. The actual fine-tuning runs on the main validator’s compute in isolated, secure environments with no internet access. This approach resolves privacy concerns that existed in the earlier version of the mechanism.

Each tournament lasts approximately one week, with roughly a one-day break between competitions to prepare for the next round. Up to 32 miners participate in each tournament, selected based on their alpha token stakes in Gradients SN56. Validators execute all submitted scripts on identical infrastructure, ensuring a fair comparison. The system evaluates results using held-out test data that miners never access during training, measuring loss scores to determine which approach produced the strongest model.
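The selection and scoring steps described above can be sketched in a few lines. This is a simplified illustration, not the validator's actual code. It assumes that "selected based on stake" means taking the largest stakes first and that the lowest held-out loss wins. The miner names and numbers are made up.

```python
def select_participants(stakes, limit=32):
    """Pick up to `limit` miners, largest alpha stake first (assumed rule)."""
    ranked = sorted(stakes, key=stakes.get, reverse=True)
    return ranked[:limit]


def rank_by_held_out_loss(losses):
    """Order miners from best (lowest held-out loss) to worst."""
    return sorted(losses, key=losses.get)


# Hypothetical stakes and held-out losses for three miners.
stakes = {"miner_a": 120.0, "miner_b": 300.0, "miner_c": 45.0}
participants = select_participants(stakes, limit=2)

held_out_losses = {"miner_a": 0.91, "miner_b": 1.07}
leaderboard = rank_by_held_out_loss(held_out_losses)
print(participants)  # ['miner_b', 'miner_a']
print(leaderboard)   # ['miner_a', 'miner_b']
```

Because every script runs on identical infrastructure and is scored on data the miners never saw, the ranking reflects the training approach itself rather than differences in hardware or test-set leakage.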

The tournament structure rewards top performers with exponentially higher weight potential. When a tournament concludes, the winning AutoML script becomes open source and gets uploaded to the gradients-opensource repository on GitHub. This open-source release creates a growing library of proven training approaches that benefit the entire community.
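One way to picture "exponentially higher weight" is a scheme in which each rank receives a fixed fraction of the weight given to the rank above it. The sketch below is purely illustrative. The `decay` value and the normalization are assumptions, and the real Gradients weighting formula may differ.

```python
def exponential_rank_weights(ranked_miners, decay=0.5):
    """Assign normalized weights that shrink geometrically with rank.

    ranked_miners is ordered best first. decay is a hypothetical parameter:
    each rank gets `decay` times the weight of the rank above it.
    """
    raw = {miner: decay ** rank for rank, miner in enumerate(ranked_miners)}
    total = sum(raw.values())
    return {miner: weight / total for miner, weight in raw.items()}


weights = exponential_rank_weights(["first", "second", "third"])
for miner, weight in weights.items():
    print(f"{miner}: {weight:.3f}")
```

Under this kind of scheme the winner's share is always a fixed multiple of the runner-up's, so small improvements in rank near the top translate into large differences in reward.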

For end users, the experience stays simple. The gradients.io platform provides a four-click interface where anyone can select a model from Hugging Face, upload their dataset, configure basic preferences, and launch a training job. Users can monitor progress through Weights & Biases integration and deploy finished models directly to Hugging Face. An API also supports programmatic access for developers who prefer to integrate training into their own workflows.

Behind the scenes, Gradients (SN56) handles all the complexity that typically requires specialized ML engineering knowledge. The platform automatically manages hyperparameter optimization, learning rate scheduling, data preprocessing, and evaluation. Miners compete on their ability to discover novel training configurations that the standard approaches miss.

Who is behind it?

Rayon Labs built and operates Gradients SN56. The company develops multiple subnets on the Bittensor network and has established a strong reputation within the ecosystem. In addition to Gradients, Rayon Labs operates Chutes (SN64), the leading serverless AI inference subnet that became the first Bittensor subnet to reach a $100 million market capitalization.

A decentralized team of engineers founded Gradients, originally led by WanderingWeights (Chris). Today, Besim and WanderingWeights jointly lead the project. WanderingWeights has appeared on multiple podcasts, including Revenue Search and Bittensor Guru, to discuss the technical architecture and business strategy behind the platform. The team behind Rayon Labs operates as a small, globally distributed group of engineers working across multiple subnets.

Rayon Labs subnets collectively account for over a quarter of total emissions on the Bittensor network, according to community analysts. This dominance reflects the practical utility and consistent delivery that the team has demonstrated across their product lineup. Messari, the crypto research firm, published a dedicated report on Rayon Labs highlighting the synergy between their subnets.

Why is it valuable?

Gradients (SN56) has produced benchmark results that directly challenge established AutoML providers. The team conducted 180 controlled experiments comparing their platform against HuggingFace AutoTrain, TogetherAI, Google Cloud Vertex AI, and Databricks. Performance measurements used loss scores on held-out test data that miners never accessed during training.

The results showed that Gradients achieved an 82.8% win rate against HuggingFace AutoTrain and 100% win rates against TogetherAI, Databricks, and Google Cloud. Gradients performed strongest on RAG tasks (44.4% above median) and translation (36.8% above median). HuggingFace showed comparable performance on reasoning tasks but struggled significantly with code generation. TogetherAI consistently performed below median across all task categories.
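A win rate in this setting is simply the share of head-to-head experiments in which one platform's model reaches a lower held-out loss than the other's. The sketch below shows that calculation on made-up numbers. The losses are not the benchmark data, and the function name is illustrative.

```python
def win_rate(gradients_losses, baseline_losses):
    """Share of paired experiments where Gradients has the lower held-out loss."""
    if not gradients_losses or len(gradients_losses) != len(baseline_losses):
        raise ValueError("need two non-empty, equal-length lists of losses")
    wins = sum(g < b for g, b in zip(gradients_losses, baseline_losses))
    return wins / len(gradients_losses)


# Hypothetical held-out losses from four paired experiments.
gradients = [0.9, 1.1, 0.8, 1.0]
baseline = [1.0, 1.0, 0.9, 1.2]
print(f"{win_rate(gradients, baseline):.1%}")  # prints "75.0%"
```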

These results demonstrate why competitive, multi-miner training produces better outcomes than single-strategy approaches. When multiple miners independently search for optimal configurations, they explore a wider space of possibilities. Traditional platforms use one predetermined approach. Gradients pools dozens of independent strategies, increasing the chances of finding configurations that standard methods consistently miss.
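A rough back-of-the-envelope model shows why more independent searches help. Suppose each strategy has some probability of landing on a strong configuration. If the strategies are fully independent, which is a strong assumption since real miners may share ideas and tooling, the chance that at least one succeeds grows quickly with the number of strategies. The probability and counts below are illustrative, not measured values.

```python
def prob_at_least_one_hit(p_single, n_strategies):
    """Chance that at least one of n independent strategies succeeds.

    Assumes every strategy succeeds with the same probability p_single and
    that outcomes are independent, which real miners only approximate.
    """
    return 1 - (1 - p_single) ** n_strategies


# Hypothetical: each strategy has a 5% chance of finding a strong configuration.
for n in (1, 8, 32):
    print(n, round(prob_at_least_one_hit(0.05, n), 3))
```

Even when any single strategy is unlikely to find the best configuration, a pool of 32 makes it much more likely that someone does.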

The platform has already attracted over 3,000 paying users, primarily hobbyists and smaller organizations that previously could not afford professional model fine-tuning. All fiat revenue generated through the platform goes toward buying and locking up the Gradients alpha token, creating a direct economic link between platform usage and token value.

Beyond individual users, the training pipeline has processed over 2 billion rows of data and trained models with a combined 118 trillion parameters since launch. These numbers reflect real usage at scale, not theoretical benchmarks. The platform delivers results that are significantly cheaper than, and often better than, those of the major incumbents, making professional-grade AI training accessible to a much wider audience.

The future of Gradients

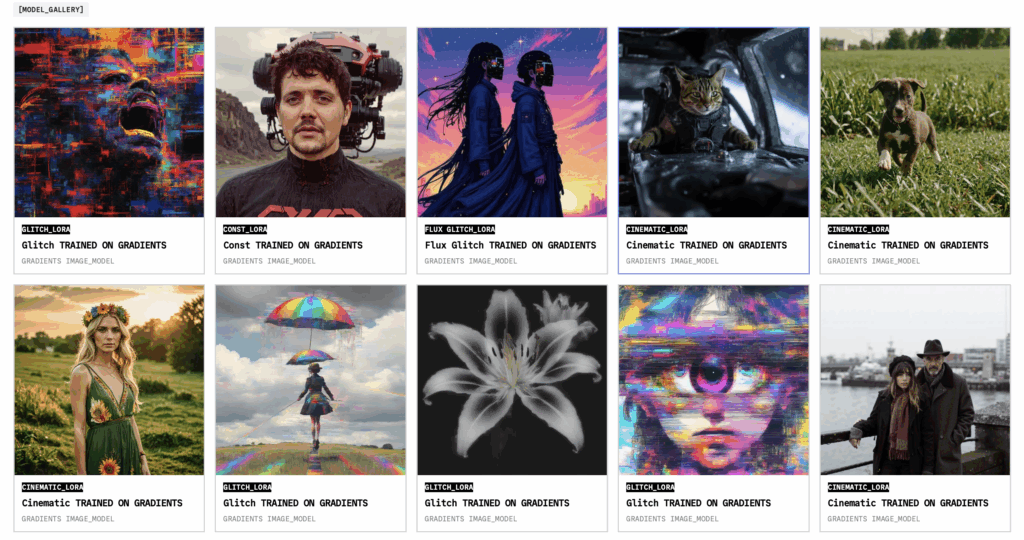

Gradients (SN56) continues expanding its training capabilities across multiple fronts. The team launched full-featured image model training tools with advanced preprocessing pipelines and specialized infrastructure optimized for diffusion models. This expansion brings the same competitive, multi-miner approach to visual AI that previously only applied to text models.

The next major milestone involves introducing large-scale pretraining for LLMs, enabling organizations to build foundational models from scratch through the decentralized network. This moves Gradients beyond fine-tuning into the more ambitious territory of training entirely new base models.

Enterprise adoption represents a key focus area. The tournament mechanism already addresses a major corporate concern by running all training on validator infrastructure with no internet access, which means proprietary datasets never leave a controlled environment. The team plans to further strengthen enterprise capabilities through integration with Trusted Execution Environments available on Chutes, providing an additional layer of data privacy and security.

The synergy with other Rayon Labs subnets creates a full-stack AI pipeline. Users can fine-tune models through Gradients (SN56) and host them for inference on Chutes (SN64). This integrated approach gives Rayon Labs a significant structural advantage, as improvements in one subnet directly benefit the others.

Sources:

https://github.com/gradients-ai/G.O.D

https://www.gradients.io