Jensen Huang Calls Bittensor “Modern Version of Folding@home”

NVIDIA CEO Jensen Huang publicly acknowledged Bittensor during a live conversation with Chamath Palihapitiya on the All-In Podcast at GTC 2026. The exchange took place on the show floor of NVIDIA’s annual GPU Technology Conference in San Jose, California, on March 18, 2026. When Chamath described a decentralized AI training run on Templar, Bittensor Subnet 3, Jensen responded with a comparison that instantly framed the network for a mainstream audience: “Our modern version of Folding@home.”

This marks the first time the CEO of the world’s most valuable semiconductor company has referenced Bittensor by name in a major public forum. The market reacted within hours: $TAO surged over 17% and trading volume nearly doubled overnight. The Opentensor Foundation also posted a correction clarifying the true scale of the achievement Chamath had described.

The Conversation That Put Bittensor on the Map

The moment came during a segment on the future of open-source AI. Chamath Palihapitiya, billionaire investor and one of four hosts of the All-In Podcast, turned the conversation toward decentralized model training. Here is what he said:

Two days ago, you may not have seen this because you were busy on stage, but there was a training run that happened in this crypto project called Bittensor Subnet 3. They managed to train a 4 billion parameter LLaMA model, totally distributed, with a bunch of people contributing excess compute, but they were able to do it statefully and manage a training run, which I thought was a pretty crazy technical accomplishment. Because it’s like random people and each person gets a little share.

Jensen Huang recognized the concept immediately and drew a parallel to one of the most famous distributed computing projects in history:

Our modern version of Folding@home.

Folding@home launched in 2000 at Stanford University. The project united millions of personal computers worldwide to simulate protein folding, contributing to breakthroughs in disease research. At its peak during the COVID-19 pandemic, Folding@home became the world’s most powerful computing system, surpassing the combined output of the top 500 supercomputers. By comparing Bittensor to Folding@home, Jensen placed decentralized AI training in a lineage that mainstream tech audiences already understand and respect.

Chamath then pushed the discussion further, asking about the long-term implications of decentralized compute for open-source AI:

What do you think about the end state of open source? Do you see this decentralization of architecture as well and decentralization of compute to support open weights and a totally open-source approach to making sure AI is broadly available to everyone?

Jensen’s response laid out a clear framework for how he sees the AI landscape evolving:

I believe we fundamentally need models as a first class product, proprietary product, as well as models as open source. These two things are not A or B, it’s A and B. There’s no question about it. And the reason for that is because models is a technology, not a product.

That distinction matters. Jensen did not frame open-source AI as a competitor to proprietary systems. He framed it as a complementary force, positioning decentralized projects like Bittensor as part of the same broader AI ecosystem that NVIDIA’s hardware powers.

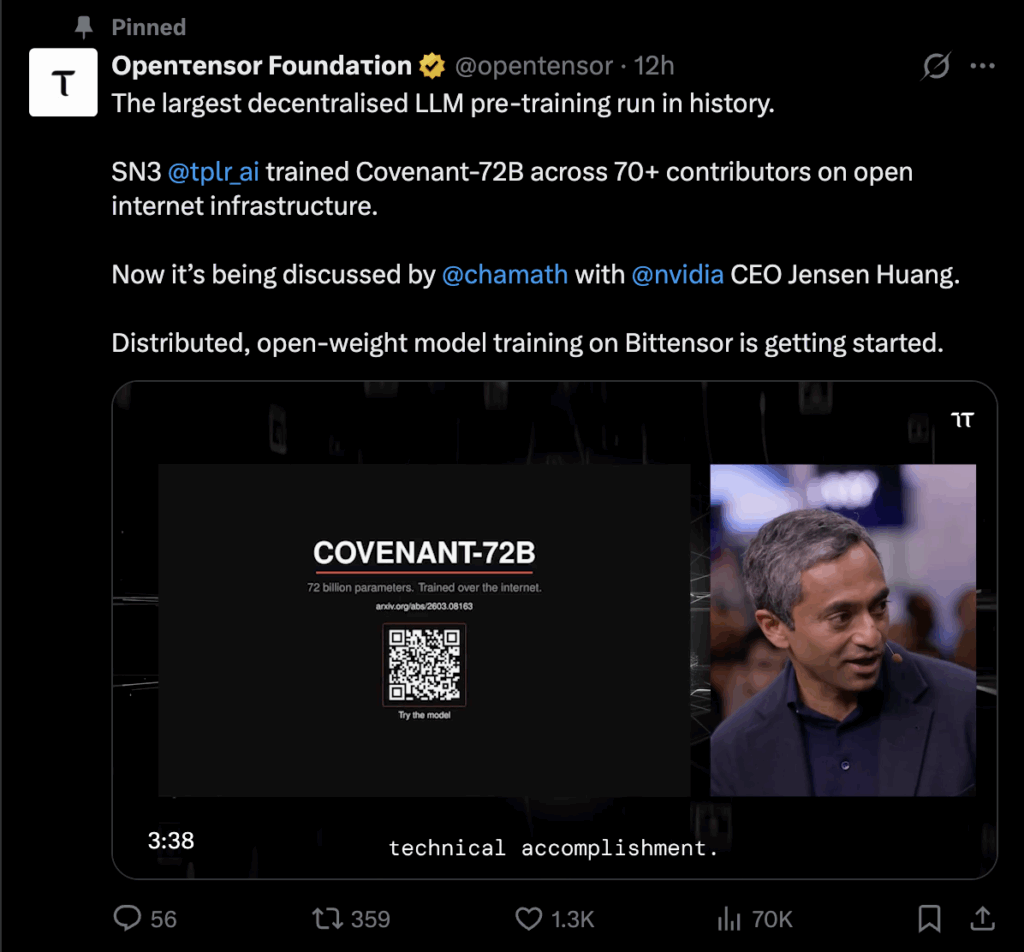

The Correction: Covenant-72B, Not 4 Billion Parameters

There is one factual detail that Chamath got wrong on air. He described the training run as producing a “4 billion parameter LLaMA model.” The actual model is significantly larger.

On March 19, 2026, the Opentensor Foundation posted a correction on X:

The largest decentralised LLM pre-training run in history. SN3 @tplr_ai trained Covenant-72B across 70+ contributors on open internet infrastructure. Now it’s being discussed by @chamath with @nvidia CEO Jensen Huang.

The model in question is Covenant-72B, a 72-billion-parameter large language model pre-trained entirely on Bittensor Subnet 3 (Templar). At 72 billion parameters versus the 4 billion Chamath cited, the model is 18 times larger than what he described on air. The Templar team also confirmed the correction on their own X account.

What Is Covenant-72B

Covenant-72B represents the largest decentralized LLM pre-training run ever completed. The training finished on March 10, 2026, eight days before the All-In Podcast conversation at GTC.

Covenant-72B has 72 billion parameters and was trained on approximately 1.1 trillion tokens of general internet data. Over 70 independent contributors provided GPU compute throughout the run. No centralized data center or whitelist was involved. Anyone with the minimum hardware requirement could join or leave freely at any time.

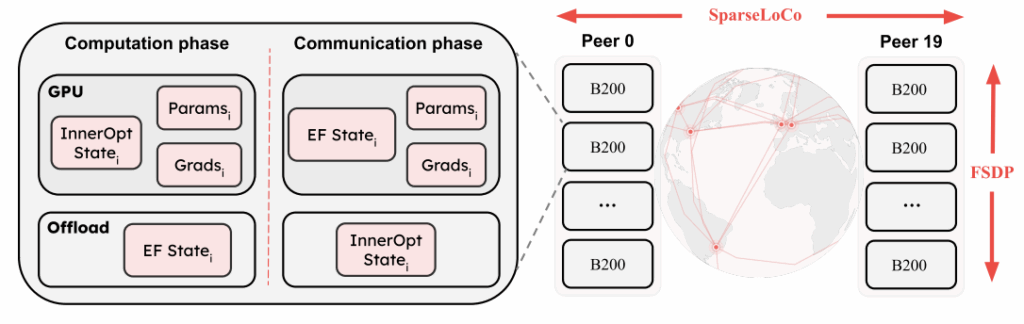

The key technical breakthrough behind the run is SparseLoCo, an optimizer that compresses communication payloads by over 146x compared to standard gradient synchronization. This allowed participants to maintain 94.5% compute utilization even on regular home internet connections. On benchmarks, Covenant-72B scored 67.1 on MMLU (zero-shot), surpassing centralized baselines including LLaMA-2-70B (65.6) and LLM360 K2 (65.5) under identical evaluation conditions.
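To get a feel for what a 146x reduction means in practice, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that an uncompressed update carries one 16-bit value per parameter and that a contributor has a 500 Mbps connection. Neither figure comes from the Templar team, and the real protocol may shard, quantize, or schedule updates differently.

```python
# Back-of-the-envelope payload arithmetic.
# ASSUMPTIONS (not from the article): 2 bytes per parameter for a dense
# update, and a hypothetical 500 Mbps home link.
PARAMS = 72e9            # Covenant-72B parameter count
BYTES_PER_VALUE = 2      # assumed 16-bit values
COMPRESSION = 146        # "over 146x", treated here as a lower bound
HOME_LINK_MBPS = 500     # hypothetical link speed


def payload_gb(params: float, bytes_per_value: int, compression: float = 1.0) -> float:
    """Size of one synchronization payload in gigabytes (1e9 bytes)."""
    return params * bytes_per_value / compression / 1e9


def transfer_seconds(gigabytes: float, link_mbps: float) -> float:
    """Seconds needed to move `gigabytes` over a `link_mbps` megabit/s link."""
    return gigabytes * 8_000 / link_mbps  # 1 GB = 8,000 megabits


dense_gb = payload_gb(PARAMS, BYTES_PER_VALUE)
sparse_gb = payload_gb(PARAMS, BYTES_PER_VALUE, COMPRESSION)

print(f"Dense payload:      {dense_gb:.1f} GB, "
      f"{transfer_seconds(dense_gb, HOME_LINK_MBPS) / 60:.1f} min per sync")
print(f"Compressed payload: {sparse_gb:.2f} GB, "
      f"{transfer_seconds(sparse_gb, HOME_LINK_MBPS):.1f} s per sync")
```

Under those assumptions a dense payload would be 144 GB per synchronization, while the compressed one drops to just under 1 GB. On the assumed link that is the difference between roughly 38 minutes and roughly 16 seconds, which is why compression on this scale matters for contributors on consumer connections.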

All weights and checkpoints are published on Hugging Face under an Apache License. The research paper is available on arXiv (published March 9, 2026).

Before Covenant-72B, the largest decentralized training run was INTELLECT-1 by Prime Intellect at 10 billion parameters. Covenant-72B is 7.2x larger while achieving lower communication overhead per round.

For a full technical breakdown, read our analysis here.

Who Is Behind This Achievement

Templar operates Subnet 3 on the Bittensor network. The project is led by Sam Dare and has expanded into Covenant AI, an organization now running three interconnected subnets on Bittensor:

- Templar (SN3) handles decentralized pre-training. This is the subnet that produced Covenant-72B.

- Basilica (SN39) provides a trustless GPU marketplace for decentralized compute.

- Grail (SN81) focuses on post-training and reinforcement learning.

Together, these three subnets form a decentralized pipeline that covers the full AI model lifecycle. The Templar team includes researchers affiliated with Mila (the Quebec AI institute based in Montreal), the Université de Montréal, and Concordia University.

To learn more about how Templar works, read: Your Simple Guide to Templar (SN3).

What This Means for Bittensor

Jensen Huang’s remark changes the visibility equation for the entire network. Until now, Bittensor operated almost exclusively within crypto-native and decentralized AI circles. A direct acknowledgment from the CEO of NVIDIA, whose GPUs power the vast majority of AI training, is a different category of validation.

Several factors amplify the significance.

The Folding@home comparison works. It instantly communicates the concept of decentralized compute to anyone who followed the protein folding project. No explanation of blockchain incentives or subnet architecture required. Jensen did the framing work in five words.

The All-In Podcast reaches the right audience. The show is hosted by four of Silicon Valley’s most prominent investors: Chamath Palihapitiya, Jason Calacanis, David Sacks, and David Friedberg. Its audience spans venture capital, Wall Street, and the broader tech industry. This is not a crypto podcast echo chamber.

The timing aligns with institutional momentum. Grayscale Investments filed an S-1 registration statement with the SEC in December 2025 to convert its Bittensor Trust into a spot ETF. The All-In endorsement lands as institutional interest in Bittensor is already building.

Jensen’s philosophical framing validates the thesis. By calling AI models “a technology, not a product” and framing open-source and proprietary approaches as complementary, he endorsed the core idea behind Bittensor: that decentralized networks can contribute meaningfully to the global AI stack.

Market Reaction

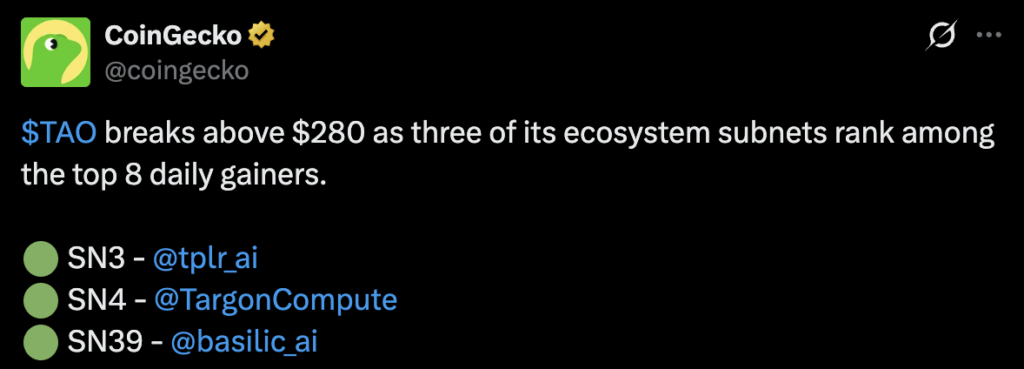

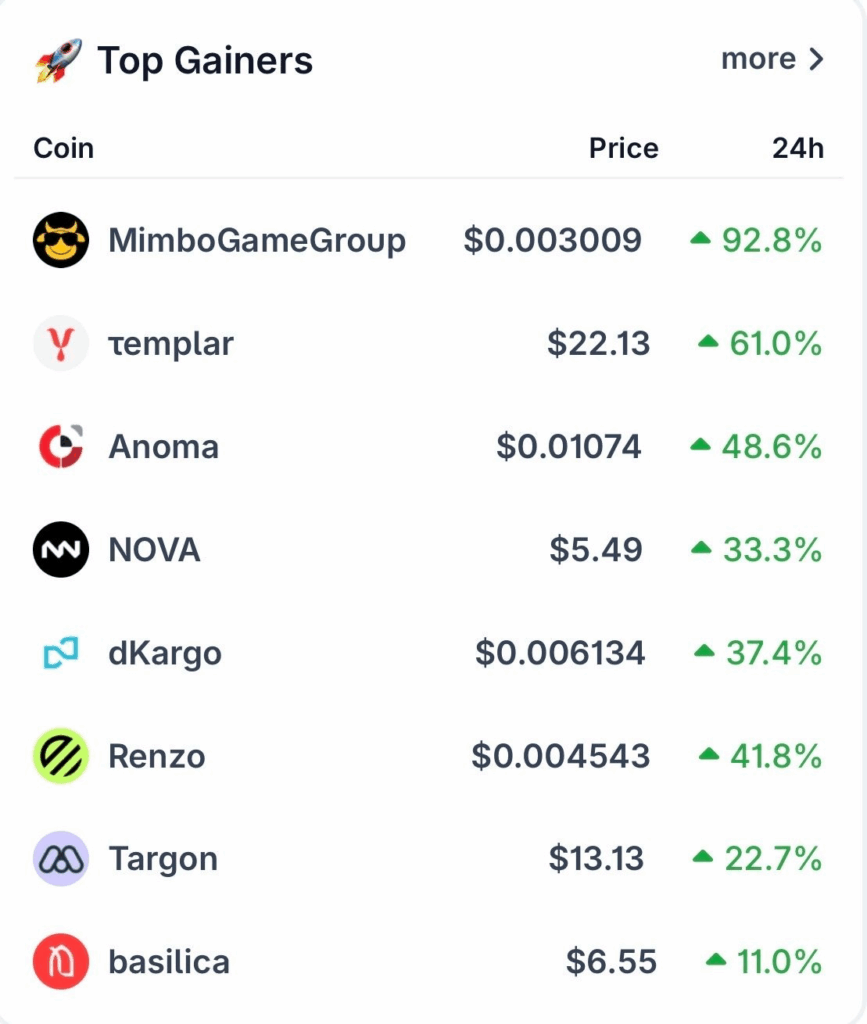

The market response was immediate and substantial. $TAO surged past $298, registering gains exceeding 17% within 24 hours of the episode airing. Daily trading volume peaked at $677 million, the highest level since November 2025. Futures open interest climbed to $361 million, nearly triple the $131 million recorded on March 4.
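As a quick sanity check on those figures, the short sketch below derives two numbers the paragraph implies but does not state. Both are inferences from the quoted values, not reported data: because the article gives “past $298” and “exceeding 17%” as bounds, the implied starting price is only approximate.

```python
# Figures quoted in the paragraph above.
price_after = 298.0                 # USD, "surged past $298"
gain = 0.17                         # "exceeding 17%"
oi_now, oi_mar4 = 361e6, 131e6      # futures open interest, USD

# Derived values (inferences, not reported data).
implied_prior_price = price_after / (1 + gain)
oi_multiple = oi_now / oi_mar4
oi_increase_pct = (oi_multiple - 1) * 100

print(f"Implied price before the move: about ${implied_prior_price:.2f}")
print(f"Open interest multiple: {oi_multiple:.2f}x (+{oi_increase_pct:.0f}%)")
```

The open interest multiple works out to about 2.76x, consistent with the “nearly triple” description.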

Three Bittensor ecosystem subnets also ranked among the top eight daily gainers on CoinGecko: SN3 Templar, SN4 Targon, and SN39 Basilica. The structural mechanics of the Bittensor network amplified the price action: investors need to hold $TAO to access subnet-specific tokens, so concentrated demand for any single subnet cascades into buying pressure on the base asset.

The Templar team’s original announcement of Covenant-72B on X had already reached 1.7 million views before the All-In episode aired. The Jensen Huang endorsement added a second wave of attention from outside the crypto ecosystem entirely.

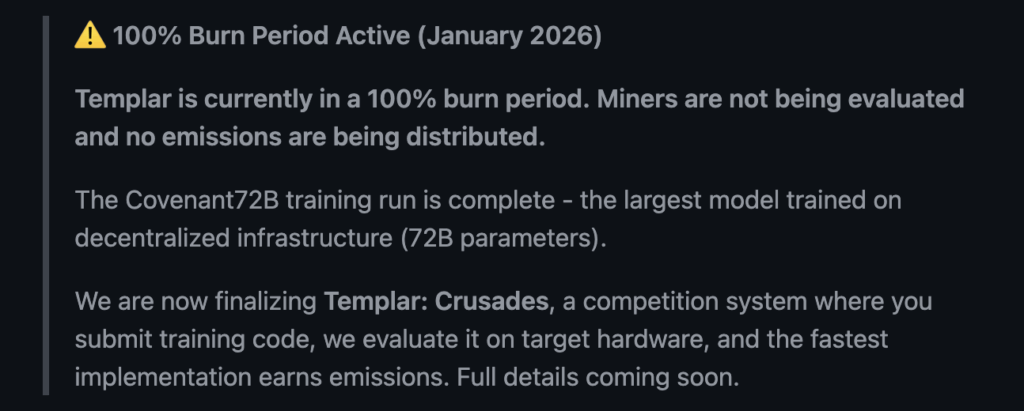

What Comes Next

The Covenant-72B training run is complete, but Templar’s roadmap continues. The next phase, called Crusades, shifts focus from coordination to optimization. Participants will submit training code, validators will benchmark implementations on target hardware, and emissions will flow to the most efficient solutions.
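The actual Crusades scoring rule is not described here, so the following is only a toy sketch of how a benchmark-driven payout could work. Everything in it is hypothetical: the `allocate_emissions` function, the miner names, the tokens-per-second metric, and the choice to split emissions proportionally among the top two submissions are all assumptions made for illustration.

```python
from statistics import median


def allocate_emissions(
    benchmarks: dict[str, list[float]],
    total_emission: float,
    top_k: int = 2,
) -> dict[str, float]:
    """Toy rule: split `total_emission` among the `top_k` submissions with
    the highest median throughput, in proportion to that throughput.
    This is a hypothetical mechanism, not the Crusades specification."""
    # Median across validator runs damps the effect of a single outlier.
    scores = {name: median(runs) for name, runs in benchmarks.items() if runs}
    winners = sorted(scores, key=scores.get, reverse=True)[:top_k]
    total = sum(scores[w] for w in winners)
    if total <= 0:
        return {}
    return {w: total_emission * scores[w] / total for w in winners}


# Hypothetical tokens-per-second measurements from three validator runs each.
benchmarks = {
    "miner_a": [1200.0, 1180.0, 1210.0],
    "miner_b": [900.0, 950.0, 940.0],
    "miner_c": [1500.0, 1490.0, 1520.0],
}

payouts = allocate_emissions(benchmarks, total_emission=100.0)
for name, amount in sorted(payouts.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {amount:.1f}")
```

In this toy setup the slowest submission receives nothing and the two fastest share the emission in proportion to their measured throughput. A real design would also have to handle correctness checks, hardware variance, and attempts to game the benchmark.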

Meanwhile, Covenant AI is building out its vertical stack across all three subnets. The goal is a fully decentralized pipeline where pre-training, compute, and alignment all happen on the Bittensor network without centralized control.

The combination of a Jensen Huang endorsement, a record-breaking training run, and growing institutional interest through Grayscale positions Bittensor for broader adoption. Whether this momentum sustains will depend on the network’s ability to keep shipping technical results at the pace the market now expects.

The conversation at GTC may have lasted only a few minutes. But for Bittensor, it was decades of distributed computing philosophy meeting its most visible moment of mainstream recognition.