Covenant-72B Changes Everything | Weekly Bittensor Update

Big Events in the Bittensor Ecosystem

Proof of Talk Returns to the Louvre With a Dedicated Bittensor Track

Proof of Talk is coming back to the Louvre Palace in Paris on June 2-3, 2026, and Bittensor gets its own dedicated track for the second year running. Co-founders Jacob Steeves and Ala Shaabana will be in attendance alongside key builders, subnet operators, and ecosystem leaders. The event will spotlight how the network is evolving and where decentralized intelligence is heading next.

General Tensor Raises $5M to Build Bittensor Infrastructure

General Tensor, formerly known as General TAO Ventures, has closed $5M across oversubscribed pre-seed and seed rounds. The seed round was anchored by Good Morning Holdings, a fund led by Lok Lee and backed by Goldman Sachs. The pre-seed, closed back in December 2024, was led by Lvna Capital with participation from DCG, X Ventures, Proof of Talk, and Outliers Fund.

The oversubscribed nature of both rounds signals growing institutional appetite for operational exposure to decentralized AI networks. Rather than passively accumulating TAO on the open market, General Tensor vertically integrates mining, validating, and subnet infrastructure. The company claims this lets it generate TAO at roughly 40x the cost efficiency of buying it directly on the market. That claimed margin is what makes the company attractive to institutional investors who want production-side exposure to the Bittensor ecosystem rather than simple token speculation.

With fresh capital secured and heavyweight backers in place, General Tensor is positioning itself as a core infrastructure participant within Bittensor, focused on scalable operations and long-term value creation as the decentralized AI economy continues to expand.

Subnet Updates

Covenant-72B: The Largest Decentralized LLM Training Run in History

Templar (SN3) completed Covenant-72B, a 72-billion-parameter language model pre-trained entirely over commodity internet connections with no centralized data center and no whitelist. Over 70 unique peers contributed compute throughout the run, training the model on roughly 1.1 trillion tokens. The result scored 67.1 on MMLU (zero-shot), exceeding centralized baselines like LLaMA-2-70B (65.6) and LLM360 K2 (65.5) under identical evaluation conditions.

The key technical breakthrough is SparseLoCo, an optimizer that compresses communication payloads by over 146x compared to dense gradient synchronization. That allowed the team to maintain 94.5% compute utilization even over standard home internet connections. Trust was handled by Gauntlet, a blockchain-native validator running on Bittensor that automatically scores every contribution each round, catching free-riders and adversarial actors without manual vetting.
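The SparseLoCo paper describes the exact method. As a rough illustration of why sparse communication shrinks payloads so much, here is a minimal Python sketch of generic top-k sparsification with error feedback, a standard building block in communication-efficient training. The function names, the 1% density in the usage line, and the 32-bit index/value encoding are assumptions for illustration, not SparseLoCo's implementation.

```python
from typing import List, Tuple


def topk_compress(
    update: List[float], error: List[float], k: int
) -> Tuple[List[int], List[float], List[float]]:
    """Keep the k largest-magnitude entries of (update + carried-over error).

    Returns (indices, values, new_error). new_error holds everything that was
    not transmitted, so it can be re-added next round (error feedback).
    """
    corrected = [u + e for u, e in zip(update, error)]
    # Indices of the k entries with the largest absolute value, in ascending order.
    top = sorted(range(len(corrected)), key=lambda i: abs(corrected[i]), reverse=True)[:k]
    top.sort()
    values = [corrected[i] for i in top]
    new_error = list(corrected)
    for i in top:
        new_error[i] = 0.0  # this entry was sent, so nothing is left to carry over
    return top, values, new_error


def decompress(indices: List[int], values: List[float], size: int) -> List[float]:
    """Rebuild a dense vector from the transmitted (index, value) pairs."""
    dense = [0.0] * size
    for i, v in zip(indices, values):
        dense[i] = v
    return dense


def compression_ratio(size: int, k: int, value_bytes: int = 4, index_bytes: int = 4) -> float:
    """Dense 32-bit payload size divided by the sparse (index + value) payload size."""
    dense = size * 4
    sparse = k * (value_bytes + index_bytes)
    return dense / sparse


# Example: one round of compression on a tiny update.
idx, vals, err = topk_compress([0.1, -3.0, 0.5, 2.0], [0.0, 0.0, 0.0, 0.0], k=2)
print(idx, vals, err)
print(compression_ratio(1_000_000, 10_000))
```

With 1% density and 32-bit indices and values, the ratio works out to 50x; reaching figures like the reported 146x generally requires cheaper encodings on top (for example, quantizing the transmitted values), which this sketch does not attempt. Error feedback matters because whatever is not sent this round is carried forward and re-added next round instead of being silently discarded.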

The model is 7.2x larger than INTELLECT-1 by Prime Intellect, yet achieves lower communication overhead per round. All weights and checkpoints are published on Hugging Face under an Apache License, and the research paper is available on arXiv. The team has since expanded into Covenant AI, operating three interconnected subnets: Templar (SN3) for pre-training, Basilica (SN39) for decentralized compute, and Grail (SN81) for reinforcement learning post-training.

Learn more about Covenant-72B.

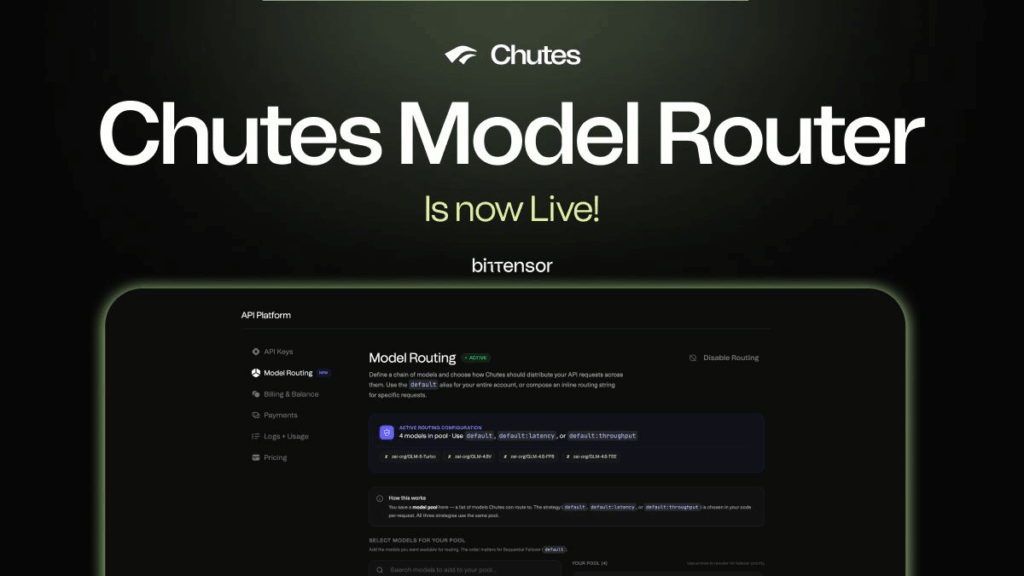

Chutes (SN64) Ships End-to-End Encryption and a New Model Router

Chutes (SN64) had a busy week with two major infrastructure releases. First, the team shipped end-to-end encryption for AI inference. The feature encrypts prompts directly on the developer’s machine and sends them to a specific GPU running inside a Trusted Execution Environment (TEE).

No one in the chain can read the data: not the network, not Chutes itself, not the miners operating the hardware. The encryption stack uses ML-KEM-768, a NIST-standardized post-quantum key encapsulation mechanism, combined with ChaCha20-Poly1305 for authenticated encryption. Every request generates a fresh ephemeral keypair, which provides forward secrecy, and because the key exchange is post-quantum, captured traffic is designed to stay unreadable even to future quantum computers. The code is fully open source under the MIT license.
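To make the symmetric half of that stack concrete, here is a dependency-free Python sketch of ChaCha20-Poly1305 authenticated encryption as specified in RFC 8439. It is not Chutes' code. The ML-KEM-768 step is not implemented: the 32-byte shared secret it would produce is simulated with random bytes, and the helper names `seal` and `open_sealed` are hypothetical. The code is for reading only; it is not constant-time, so a real client should use a vetted cryptography library.

```python
import hmac
import os
import struct

MASK32 = 0xFFFFFFFF


def _rotl(v: int, n: int) -> int:
    """Rotate a 32-bit word left by n bits."""
    return ((v << n) & MASK32) | (v >> (32 - n))


def _quarter_round(s: list, a: int, b: int, c: int, d: int) -> None:
    s[a] = (s[a] + s[b]) & MASK32
    s[d] = _rotl(s[d] ^ s[a], 16)
    s[c] = (s[c] + s[d]) & MASK32
    s[b] = _rotl(s[b] ^ s[c], 12)
    s[a] = (s[a] + s[b]) & MASK32
    s[d] = _rotl(s[d] ^ s[a], 8)
    s[c] = (s[c] + s[d]) & MASK32
    s[b] = _rotl(s[b] ^ s[c], 7)


def chacha20_block(key: bytes, counter: int, nonce: bytes) -> bytes:
    """One 64-byte keystream block: 4 constants, 8 key words, counter, 3 nonce words."""
    state = [0x61707865, 0x3320646E, 0x79622D32, 0x6B206574]
    state += list(struct.unpack("<8I", key)) + [counter] + list(struct.unpack("<3I", nonce))
    w = list(state)
    for _ in range(10):  # 20 rounds = 10 column/diagonal double rounds
        _quarter_round(w, 0, 4, 8, 12)
        _quarter_round(w, 1, 5, 9, 13)
        _quarter_round(w, 2, 6, 10, 14)
        _quarter_round(w, 3, 7, 11, 15)
        _quarter_round(w, 0, 5, 10, 15)
        _quarter_round(w, 1, 6, 11, 12)
        _quarter_round(w, 2, 7, 8, 13)
        _quarter_round(w, 3, 4, 9, 14)
    return struct.pack("<16I", *[(x + y) & MASK32 for x, y in zip(w, state)])


def chacha20_xor(key: bytes, counter: int, nonce: bytes, data: bytes) -> bytes:
    """Encrypt or decrypt by XOR-ing data with the keystream, one block at a time."""
    out = bytearray()
    for i in range(0, len(data), 64):
        block = chacha20_block(key, counter + i // 64, nonce)
        out += bytes(x ^ y for x, y in zip(data[i:i + 64], block))
    return bytes(out)


def poly1305(msg: bytes, key: bytes) -> bytes:
    """One-time MAC: r is the clamped first half of the key, s the second half."""
    r = int.from_bytes(key[:16], "little") & 0x0FFFFFFC0FFFFFFC0FFFFFFC0FFFFFFF
    s = int.from_bytes(key[16:], "little")
    p, acc = (1 << 130) - 5, 0
    for i in range(0, len(msg), 16):
        n = int.from_bytes(msg[i:i + 16] + b"\x01", "little")
        acc = (acc + n) * r % p
    return ((acc + s) & ((1 << 128) - 1)).to_bytes(16, "little")


def _mac_data(aad: bytes, ct: bytes) -> bytes:
    pad = lambda b: b"\x00" * (-len(b) % 16)
    return aad + pad(aad) + ct + pad(ct) + struct.pack("<QQ", len(aad), len(ct))


def seal(key: bytes, nonce: bytes, plaintext: bytes, aad: bytes = b"") -> bytes:
    otk = chacha20_block(key, 0, nonce)[:32]  # one-time Poly1305 key from block 0
    ct = chacha20_xor(key, 1, nonce, plaintext)
    return ct + poly1305(_mac_data(aad, ct), otk)


def open_sealed(key: bytes, nonce: bytes, sealed: bytes, aad: bytes = b"") -> bytes:
    ct, tag = sealed[:-16], sealed[-16:]
    otk = chacha20_block(key, 0, nonce)[:32]
    if not hmac.compare_digest(poly1305(_mac_data(aad, ct), otk), tag):
        raise ValueError("authentication failed")
    return chacha20_xor(key, 1, nonce, ct)


# Stand-in for ML-KEM-768 (not implemented): each request would encapsulate against
# the TEE's public key to get a fresh 32-byte shared secret. A real design would
# normally pass that secret through a KDF; here random bytes are used directly.
request_key = os.urandom(32)
nonce = os.urandom(12)
sealed = seal(request_key, nonce, b"my private prompt")
assert open_sealed(request_key, nonce, sealed) == b"my private prompt"
```

Because the Poly1305 tag covers the ciphertext, any bit flipped in transit makes `open_sealed` raise instead of returning corrupted plaintext.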

On top of that, Chutes introduced the Model Router, a system that lets developers pool up to 20 models behind a single API alias and automatically route requests across them based on live performance data. Three routing strategies are available: sequential failover (tries models in priority order), latency-optimized (picks the lowest time to first token), and throughput-optimized (selects the highest tokens per second for long-form generation). The router works with any OpenAI-compatible SDK and requires only a change to the model field, with no additional code or custom logic.
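The post does not spell out the router's internals, so the following is only a hedged Python sketch of how the three strategies could rank a pool from live metrics and fall through on errors. `ModelStats`, `pick_order`, `route`, the strategy strings, and the model names are all hypothetical; only the 20-model cap and the three strategy definitions come from the announcement.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

MAX_POOL_SIZE = 20  # cap stated in the announcement


@dataclass
class ModelStats:
    name: str
    priority: int        # lower value = tried first in failover mode
    healthy: bool        # e.g. whether the last health check succeeded
    ttft_ms: float       # observed time to first token
    tokens_per_s: float  # observed generation throughput


def pick_order(pool: List[ModelStats], strategy: str) -> List[str]:
    """Return healthy model names in the order the router would try them."""
    if len(pool) > MAX_POOL_SIZE:
        raise ValueError(f"a pool may hold at most {MAX_POOL_SIZE} models")
    live = [m for m in pool if m.healthy]
    if strategy == "failover":
        key = lambda m: m.priority
    elif strategy == "latency":
        key = lambda m: m.ttft_ms
    elif strategy == "throughput":
        key = lambda m: -m.tokens_per_s
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    return [m.name for m in sorted(live, key=key)]


def route(pool: List[ModelStats], strategy: str, send: Callable[[str], str]) -> str:
    """Try candidates in ranked order, moving to the next one if a call fails."""
    last_error: Optional[Exception] = None
    for name in pick_order(pool, strategy):
        try:
            return send(name)
        except Exception as exc:
            last_error = exc
    raise RuntimeError("all models in the pool failed") from last_error


pool = [
    ModelStats("model-a", 1, True, 900.0, 40.0),
    ModelStats("model-b", 2, True, 300.0, 25.0),
    ModelStats("model-c", 3, True, 500.0, 80.0),
]
print(pick_order(pool, "latency"))     # model-b has the lowest time to first token
print(pick_order(pool, "throughput"))  # model-c has the highest tokens per second
```

From the client's point of view, per the announcement, nothing else changes: the request's model field simply names the router alias instead of a single model.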

Together, these two releases push Chutes further into production-grade territory, combining post-quantum privacy guarantees with the kind of reliability infrastructure that enterprise developers actually need.

Targon (SN4) SDK Expands Into a Full Cloud Compute Alternative

Manifold Labs is rolling out a major feature expansion for the Targon SDK on Bittensor Subnet 4. The 2026 update introduces a new CLI for streamlined deployment, full container lifecycle management with auto-scaling, and the ability to deploy any Python source code on confidential GPUs and CPUs. All GPU tiers run on NVIDIA H200 hardware, with confidential rentals starting at $1.90 per hour through the Targon Virtual Machine (TVM), which uses Intel TDX and AMD SEV technologies for hardware-level isolation.

The SDK now supports both ephemeral sessions for testing and persistent deployments for production services. Developers can configure minimum and maximum replicas per function, and the system auto-scales based on demand. Pricing is efficiency-based, meaning teams pay only for the compute they actually use, with no charges for idle infrastructure.
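Targon's exact scaling policy is not described here, so the following is only a plausible Python sketch: a target-concurrency rule clamped to the configured minimum and maximum replicas, plus a bill computed from active replica-seconds at the $1.90 per hour starting rate quoted above. The function names, the `target_per_replica` parameter, and per-second billing per replica are assumptions, not the Targon SDK's API.

```python
import math

HOURLY_RATE_USD = 1.90  # starting confidential rental rate quoted for TVM (assumed per replica)


def desired_replicas(in_flight: int, target_per_replica: int,
                     min_replicas: int, max_replicas: int) -> int:
    """Aim for about `target_per_replica` concurrent requests per replica, within bounds."""
    if min_replicas > max_replicas:
        raise ValueError("min_replicas must not exceed max_replicas")
    wanted = math.ceil(in_flight / target_per_replica) if in_flight > 0 else 0
    return max(min_replicas, min(max_replicas, wanted))


def usage_cost(replica_seconds: float, hourly_rate: float = HOURLY_RATE_USD) -> float:
    """Charge only for seconds replicas actually ran; scaled-to-zero time costs nothing."""
    return round(replica_seconds / 3600 * hourly_rate, 4)


# 9 in-flight requests at 4 per replica need 3 replicas (within the 1..5 bounds).
print(desired_replicas(9, 4, 1, 5))
# Two replicas running for 30 minutes = 3,600 replica-seconds.
print(usage_cost(2 * 30 * 60))
```

Setting the minimum to zero is what allows idle functions to cost nothing; a minimum of one keeps a warm replica at the price of paying for it continuously.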

This positions Targon (SN4) in direct competition with centralized serverless platforms, but with cryptographic security guarantees that most cloud providers simply do not offer. Manifold Labs raised a $10.5M Series A led by OSS Capital in July 2025, and the team includes Rob Myers, a founding Bittensor contributor, alongside co-founder James Woodman, formerly COO of the Bittensor Foundation.

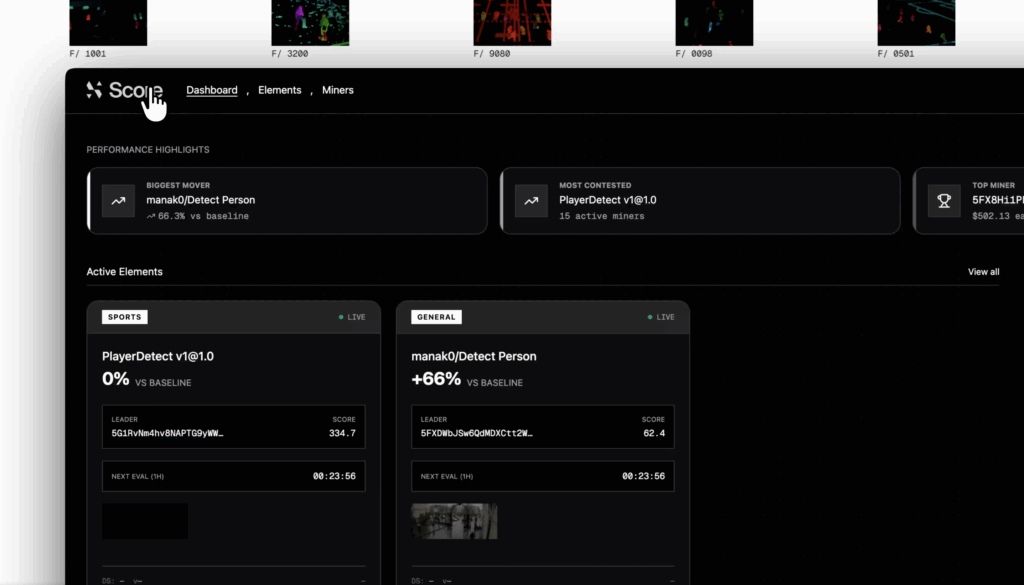

Score (SN44) Drops a New Console and Prepares Private Track Testing

Score (SN44) launched its new Programmable Vision AI console this week. The subnet is ramping up quickly with new tasks, and the first live private-track tests are scheduled for next week. More tasks mean more emissions for miners, expanding the incentive structure as the platform scales. Score continues to build its computer vision stack methodically, adding capability layer by layer.

TAO Market Update

Price: $246.10-$291.36

Weekly: +45.81%

Ranking: #30

Market Cap: $3.09B

24h Volume: $689M

Top Gainer Subnet: Beam (SN105)

Beam (SN105) is a decentralized bandwidth coordination network that enables data transfer across distributed infrastructure. Through Proof-of-Bandwidth, the network verifies real delivery and aligns incentives with measurable performance. Beam supports today’s internet workloads while enabling the growing demand for data exchange between autonomous systems and AI agents.
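Beam's actual Proof-of-Bandwidth protocol is not described in this update. As a hedged illustration of the general idea, a validator can send a random payload, require the miner to return a hash that can only be computed if every byte arrived, and credit bandwidth from the measured transfer time. Everything below, including the function names and the megabits-per-second scoring rule, is a hypothetical sketch.

```python
import hashlib
import hmac
import os


def make_challenge(size_bytes: int) -> bytes:
    """Random payload, so a miner cannot precompute the answer."""
    return os.urandom(size_bytes)


def miner_response(received: bytes) -> bytes:
    """Digest the miner returns; it only matches if the full payload was received."""
    return hashlib.sha256(received).digest()


def score(payload: bytes, response: bytes, sent_at: float, answered_at: float) -> float:
    """Verified throughput in megabits per second, or 0.0 if the proof fails."""
    expected = hashlib.sha256(payload).digest()
    elapsed = answered_at - sent_at
    if elapsed <= 0 or not hmac.compare_digest(expected, response):
        return 0.0
    return len(payload) * 8 / elapsed / 1_000_000


payload = make_challenge(1_250_000)  # 10 megabits
print(score(payload, miner_response(payload), sent_at=10.0, answered_at=11.0))
```

Both timestamps come from the validator's own clock in this sketch, so a miner cannot inflate its score by misreporting timing, and a truncated or fabricated payload yields a mismatched digest and a score of zero.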

Weekly change: +109.85%

Price: $2.703403

Market Cap: $2.35M

Volume (24h): $596.85K

FAQ:

Covenant-72B is a 72-billion-parameter language model pre-trained entirely over commodity internet connections by the Templar team on Bittensor Subnet 3. It is the largest decentralized LLM training run in history, with over 70 permissionless peers contributing compute and all weights released under an Apache License.

TAO rallied over 45% this week, driven by two major catalysts. First, the completion of Covenant-72B proved that Bittensor can train enterprise-scale AI models without centralized infrastructure. Second, the Grayscale Bittensor Trust became an SEC-reporting vehicle on March 14, removing a key compliance barrier for institutional capital.

General Tensor, formerly General TAO Ventures, is a Bittensor infrastructure company that vertically integrates mining, validating, and subnet operations. The company raised $5M in oversubscribed rounds backed by Good Morning Holdings (a Goldman Sachs-backed fund), DCG, Lvna Capital, and others.

The Chutes Model Router is a new feature on Subnet 64 that lets developers pool up to 20 AI models behind a single API alias. It automatically routes requests based on live performance data using three strategies: sequential failover, latency-optimized, and throughput-optimized.

Proof of Talk returns to the Louvre Palace in Paris on June 2-3, 2026, featuring a dedicated Bittensor track for the second year running. Co-founders Jacob Steeves and Ala Shaabana will be in attendance.